Incrementality Testing: Measure the True Lift of Your Marketing Campaigns


What Is Incrementality Testing

This guide explains how to measure the true causal impact of your marketing. Incrementality testing answers a deceptively simple question: would this conversion have happened anyway, without the marketing touchpoint?

Most marketing measurement—clicks, conversions, return on ad spend—tells you what happened after someone saw your ad. It does not tell you whether the ad caused the outcome. A customer who searched for your brand name and clicked your branded search ad was probably going to visit your site regardless. The ad captured the conversion but did not create it.

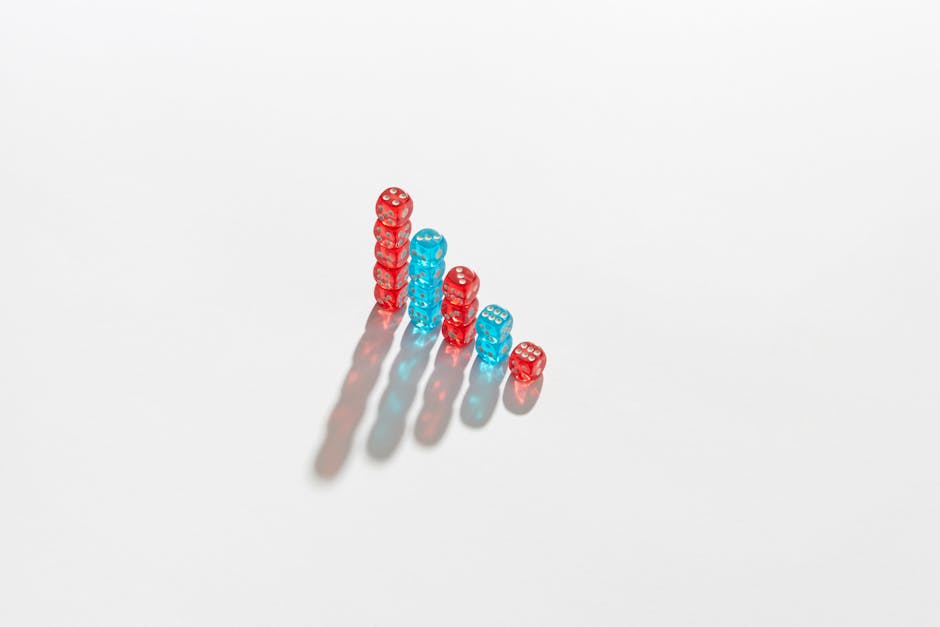

Incrementality testing isolates the causal effect by comparing a group exposed to your marketing against a control group that was not exposed. The difference in outcomes between the two groups is the incremental lift—the conversions your marketing truly generated.

Why Incrementality Matters More Than Attribution

Attribution models distribute credit across touchpoints, but they cannot distinguish between correlation and causation. A channel can appear highly effective in attribution analysis simply because it sits at the end of the funnel and captures existing demand.

Consider this example. A Singapore e-commerce brand runs retargeting ads to people who visited product pages. Attribution shows these ads generate a 10x return on ad spend. But many of those retargeted customers would have returned and purchased anyway—they were already interested. The true incremental return might be 3x, not 10x.

Without incrementality testing, you might allocate excessive budget to retargeting while under-investing in channels that genuinely create new demand. Over time, this misallocation degrades growth because the top of the funnel shrinks.

Incrementality testing complements marketing attribution models by validating whether the credit assigned by your attribution model reflects actual causal impact. The two methods together give you the most complete picture of marketing effectiveness.

How Incrementality Testing Works

The core methodology is borrowed from randomised controlled trials in science. You split your audience into two groups.

Test group: Exposed to your marketing campaign as normal.

Control group (holdout): Not exposed to the campaign. Depending on the channel, the control group might see no ad, a public service announcement or a generic brand ad that is unrelated to the campaign being tested.

After a defined test period, compare the conversion rates of both groups. The difference is the incremental lift. If the test group converts at 4 percent and the control group at 3 percent, the incremental lift is 1 percentage point—meaning 25 percent of the conversions in the test group were truly incremental.
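The arithmetic in this example can be sketched in a few lines of Python. The function name `lift_summary` and its return keys are illustrative assumptions, not part of any platform's API.

```python
def lift_summary(test_rate: float, control_rate: float) -> dict:
    """Summarise lift from two conversion rates given as fractions (0.04 = 4 percent)."""
    # Absolute lift: the gap between the two rates, in rate units.
    absolute_lift = test_rate - control_rate
    # Share of test-group conversions that would not have happened without the campaign.
    incremental_share = absolute_lift / test_rate
    return {
        "absolute_lift_pp": absolute_lift * 100,  # expressed in percentage points
        "incremental_share": incremental_share,
    }


summary = lift_summary(test_rate=0.04, control_rate=0.03)
print(f"Absolute lift: {summary['absolute_lift_pp']:.1f} percentage points")
print(f"Incremental share of test conversions: {summary['incremental_share']:.0%}")
```

The incremental share divides by the test group rate, which is why it comes out at 25 percent here rather than the 33 percent you get when dividing by the control rate.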

For the test to be valid, the two groups must be equivalent in all ways except exposure to the campaign. This means random assignment, sufficient sample size and consistent timing. The same rigour that applies to growth experiments applies here.
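If your platform does not handle the split for you, one common approach to random assignment is to hash a stable user identifier. The sketch below is a minimal illustration under assumptions: the function name `assign_group`, the experiment label and the 10 percent holdout share are all hypothetical choices, not a prescribed method.

```python
import hashlib


def assign_group(user_id: str, experiment: str, holdout_share: float = 0.10) -> str:
    """Deterministically place a user in 'control' or 'test' for one experiment."""
    # Salting the hash with the experiment name gives each experiment its own split.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash onto a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "control" if bucket < holdout_share else "test"


# Hypothetical user IDs, used only to show that the split lands near the target share.
groups = [assign_group(f"user-{i}", "retargeting-holdout") for i in range(10_000)]
control_share = groups.count("control") / len(groups)
print(f"Control share: {control_share:.1%}")
```

Because the assignment depends only on the user ID and the experiment name, the same user always lands in the same group, which helps keep exposure consistent for the whole test period.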

Types of Incrementality Tests

Different channels and scenarios call for different test designs.

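PSA tests and ghost ads: In a PSA test, the control group sees a public service announcement or unrelated ad instead of nothing. This ensures both groups have the same ad experience, isolating the effect of your specific creative and message. Ghost ads achieve the same comparison without a placeholder: the platform records when a control user would have been served your ad but shows them whatever ad would otherwise have appeared. Meta supports randomised holdout tests through its conversion lift study tool.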

Geo-based holdout tests: Divide geographic regions into test and control markets. Run the campaign in test markets and withhold it from control markets. Compare performance across regions. This is useful for campaigns that cannot easily randomise at the user level, such as TV, outdoor or print advertising.

Matched market tests: Similar to geo-based tests but with additional statistical matching to ensure test and control markets are comparable in demographics, economics and baseline marketing performance. This improves validity, especially in diverse markets like Singapore and Southeast Asia.

On/off tests: Pause a campaign entirely for a defined period and compare performance to the period when it was active. This is the simplest form of incrementality testing but is less rigorous because external factors—seasonality, competitor activity—may change between periods.

Budget-based tests: Instead of a complete holdout, reduce spend in the control group by 50 percent and compare the change in conversions. If halving spend reduces conversions by less than 50 percent, the withdrawn spend was buying fewer incremental conversions than its share of budget suggests, either because some conversions would have happened anyway or because returns diminish at higher spend. This design is less disruptive than a full holdout and works well for Google Ads campaigns.
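For geo-based and matched market designs, one simple way to compare regions is to scale the test markets' pre-campaign baseline by the trend seen in the control markets, a difference-in-differences style estimate. This is a swapped-in illustration rather than a method the designs above prescribe, and the function name `geo_lift` and all figures are made up for the example.

```python
def geo_lift(test_pre: float, test_post: float,
             control_pre: float, control_post: float) -> dict:
    """Estimate incremental conversions in test markets from pre/post totals."""
    # Trend in the control markets, where the campaign did not run.
    control_growth = control_post / control_pre
    # What the test markets would likely have done without the campaign.
    expected_test_post = test_pre * control_growth
    incremental = test_post - expected_test_post
    return {
        "expected_without_campaign": expected_test_post,
        "incremental_conversions": incremental,
        "relative_lift": incremental / expected_test_post,
    }


# Illustrative numbers only: conversions before and during the campaign period.
result = geo_lift(test_pre=1000, test_post=1260, control_pre=800, control_post=840)
print(f"Incremental conversions: {result['incremental_conversions']:.0f}")
print(f"Relative lift: {result['relative_lift']:.0%}")
```

Using the control trend as the counterfactual addresses the main weakness of a plain on/off comparison, because seasonality that affects both sets of markets is netted out. It still assumes the two sets of markets would have moved in parallel without the campaign.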

Designing Your First Incrementality Test

Follow these steps to design a rigorous incrementality test for your Singapore marketing campaigns.

Step 1—Choose the channel. Start with the channel that receives the largest share of your budget or where you suspect the highest cannibalization of organic demand. Branded search and retargeting are common starting points because they are most prone to claiming credit for organic conversions.

Step 2—Define the success metric. What conversion event will you measure? Purchases, sign-ups, leads or revenue? Choose a single primary metric to keep the analysis clean. Track this through your marketing dashboards.

Step 3—Determine sample size. Use a sample size calculator to estimate how many users you need in each group to detect a meaningful lift with statistical significance. Smaller expected lifts require larger sample sizes.

Step 4—Set the test duration. Run the test long enough to collect sufficient data and account for weekly variation. Two to four weeks is typical for digital campaigns. Avoid running tests during atypical periods like Chinese New Year, National Day or year-end sales.

Step 5—Randomise properly. Use platform-level randomisation where available—Meta’s conversion lift tool and Google’s Ads experiment feature handle randomisation automatically. For geo-based tests, select markets that are demographically similar.

Step 6—Minimise contamination. Ensure the control group is not accidentally exposed to the campaign through other channels. If you are testing Facebook ads, make sure the control group is not seeing the same message through email or social media marketing on other platforms.
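Steps 3 and 4 can be roughed out before you commit budget. The sketch below uses the standard normal-approximation formula for comparing two proportions; the function names, the 5 percent significance level, the 80 percent power and the daily traffic figure are assumptions chosen for illustration, and a dedicated calculator may give slightly different numbers.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, expected_rate: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per group to detect baseline_rate vs expected_rate."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)
    pooled = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * sqrt(2 * pooled * (1 - pooled))
        + z_power * sqrt(baseline_rate * (1 - baseline_rate)
                         + expected_rate * (1 - expected_rate))
    ) ** 2
    return ceil(numerator / (expected_rate - baseline_rate) ** 2)


def days_needed(users_per_group: int, daily_eligible_users: int) -> int:
    """Days to fill two equal-sized groups at a given (hypothetical) daily reach."""
    return ceil(2 * users_per_group / daily_eligible_users)


n = sample_size_per_group(baseline_rate=0.03, expected_rate=0.04)
print(f"Users per group: {n}")
print(f"Days at 1,000 eligible users per day: {days_needed(n, 1000)}")
```

Halving the expected lift roughly quadruples the required sample, which is why small expected lifts push test durations out so quickly.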

Analysing and Acting on Results

After the test concludes, analyse the results with care. Here is how to interpret and act on your findings.

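Calculate incremental lift: Subtract the control group conversion rate from the test group conversion rate, then divide by the control group rate to express lift as a relative percentage. For example: (4 percent minus 3 percent) / 3 percent = 33 percent incremental lift. This relative lift uses the control rate as its base, so it is larger than the 25 percent share of test group conversions that were incremental (1 point out of 4).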

Check statistical significance: Use a chi-squared test or a conversion lift calculator to verify that the observed difference is unlikely to be due to chance. Aim for 90 to 95 percent confidence.

Calculate incremental cost per conversion: Divide the campaign cost by the number of incremental conversions (not total conversions). This metric reveals the true cost efficiency of the channel. It is often two to five times higher than the platform-reported cost per conversion.

Compare to attribution data: How does the incremental lift compare to what your attribution model reported? If attribution says a channel drove 500 conversions but incrementality shows only 200 were incremental, then 60 percent of the attributed conversions would have happened anyway, and attribution is overstating the channel's impact by a factor of 2.5. Adjust your budget allocation accordingly.

Make budget decisions: If a channel shows high incrementality, consider increasing investment. If it shows low incrementality, reduce spend or reallocate to channels with higher true impact. Feed these insights into your broader digital marketing strategy.
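The calculations above can be sketched together as follows. This is an illustration under assumptions: it uses a pooled two-proportion z-test, which for a simple two-group comparison gives the same p-value as a chi-squared test without continuity correction, and the function name, group sizes and cost figure are made up for the example.

```python
from math import sqrt
from statistics import NormalDist


def analyse_test(test_users: int, test_conversions: int,
                 control_users: int, control_conversions: int,
                 campaign_cost: float) -> dict:
    """Summarise lift, significance and incremental cost for one holdout test."""
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    relative_lift = (test_rate - control_rate) / control_rate

    # Pooled two-proportion z-test, two-sided.
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    std_error = sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z_score = (test_rate - control_rate) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z_score)))

    # Conversions the test group would have produced anyway, at the control rate.
    baseline_conversions = control_rate * test_users
    incremental_conversions = test_conversions - baseline_conversions
    if incremental_conversions > 0:
        incremental_cpa = campaign_cost / incremental_conversions
    else:
        incremental_cpa = float("inf")  # no measurable incremental conversions

    return {
        "relative_lift": relative_lift,
        "p_value": p_value,
        "incremental_conversions": incremental_conversions,
        "platform_cpa": campaign_cost / test_conversions,
        "incremental_cpa": incremental_cpa,
    }


# Illustrative figures only: 4 percent vs 3 percent conversion, 20,000 in spend.
report = analyse_test(test_users=50_000, test_conversions=2_000,
                      control_users=10_000, control_conversions=300,
                      campaign_cost=20_000)
print(f"Relative lift: {report['relative_lift']:.0%}")
print(f"Significant at 95 percent confidence: {report['p_value'] < 0.05}")
print(f"Platform CPA: {report['platform_cpa']:.2f}")
print(f"Incremental CPA: {report['incremental_cpa']:.2f}")
```

In this made-up case the incremental cost per conversion is four times the platform-reported figure, because only a quarter of the test group's conversions are incremental.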

Document all test designs, results and decisions in your experiment log. Over time, this creates an institutional knowledge base about true channel effectiveness that no competitor can replicate.

Considerations for the Singapore Market

Running incrementality tests in Singapore presents unique opportunities and constraints.

Small market size: Singapore’s compact audience means reaching statistical significance can take longer. Plan for extended test durations or accept slightly lower confidence thresholds for directional insights.

Geo-testing limitations: Singapore is a city-state with no distinct sub-markets for geo-based holdout tests. Use user-level randomisation instead, or consider testing across Singapore and Malaysia if your business serves both markets.

Multicultural audience: Different ethnic groups in Singapore may respond differently to marketing. Ensure your test and control groups are balanced across demographics to avoid skewed results.

High digital penetration: Singapore’s near-universal internet access means digital campaigns reach a large share of the population quickly, which is advantageous for accumulating sample sizes for online channel tests.

Cross-channel exposure: In a small market, consumers encounter multiple touchpoints rapidly. Control group contamination is a real risk—monitor it throughout the test period and account for it in your analysis. Strong SEO foundations ensure your organic presence does not confound results when testing paid channels.

Frequently Asked Questions

What is the difference between A/B testing and incrementality testing?

A/B testing compares two versions of a marketing element—such as ad creative or landing page—to see which performs better. Incrementality testing compares marketing exposure to no exposure to measure whether the marketing caused conversions that would not have happened otherwise.

How much budget should we allocate to incrementality testing?

Expect to withhold 10 to 20 percent of your campaign budget for the control group during the test period. This is the cost of learning—the insights you gain will save far more than the revenue temporarily lost from the holdout.

Can we run incrementality tests on organic channels?

It is difficult because you cannot easily control who sees your organic content. However, you can use techniques like pausing content marketing on specific topics or regions and comparing performance to areas where content continues. These tests are less rigorous but still informative.

How often should we run incrementality tests?

Test your largest channels annually at minimum. If you are making significant changes to targeting, creative or budget, retest sooner. Market conditions change, and a channel’s incremental value can shift over time.

What if an incrementality test shows zero lift?

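If the test had enough sample to detect a meaningful lift, this means the channel is not driving conversions that would not have happened organically. Consider reducing or pausing spend on that channel and reallocating to channels with proven incrementality. Alternatively, test different creative, audiences or offers before abandoning the channel entirely. If the test was underpowered, treat the result as inconclusive and rerun it with a larger sample or a longer duration.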

Is incrementality testing only for large companies?

Not necessarily, but it does require sufficient conversion volume for statistical significance. Singapore SMEs spending at least $5,000 per month on a single channel can run meaningful incrementality tests. Below that threshold, the results may be directional but not statistically robust.

How does incrementality testing relate to marketing mix modelling?

Marketing mix modelling (MMM) uses aggregate historical data to estimate channel contributions. Incrementality testing uses controlled experiments to measure causal impact. They are complementary—MMM provides a broad view, while incrementality testing validates specific channels.

Can we measure the incrementality of branding campaigns?

Yes, but use leading indicators rather than direct conversions. Measure brand search volume, direct traffic, aided recall or brand consideration in the test versus control groups. Branding incrementality is real but slower to manifest than performance channel incrementality.