Quality Control for Programmatic SEO: Avoid Thin Content Penalties

The Quality Challenge in Programmatic SEO

Programmatic SEO creates a fundamental tension: the strategy’s power comes from scale, but scale is the enemy of quality. When you generate 10,000 pages from templates and data, every template weakness, data gap and logic error is replicated 10,000 times. A single overlooked quality issue that would be inconsequential on a manually crafted page becomes a site-wide problem that can trigger algorithmic suppression across your entire domain.

Google’s quality systems, including the helpful content system, spam detection algorithms and core ranking updates, have grown increasingly sophisticated at identifying programmatic content that fails to provide genuine value. In March 2024 Google folded the helpful content system into its core ranking systems, so it no longer runs as a separate system, but the principles it introduced still apply. Google’s guidance on helpful content explicitly asks whether content was created primarily to attract search traffic rather than to help users. Programmatic pages that are transparently template-driven, data-thin or repetitive across thousands of URLs send precisely the signals these systems are designed to detect.

Programmatic SEO quality is not an afterthought or a polishing step. It must be designed into the architecture from the initial planning stage. The most successful programmatic SEO operations treat quality control as a core engineering discipline — with defined standards, automated enforcement, monitoring systems and continuous improvement processes. Businesses investing in SEO services at scale must understand that quality infrastructure is as important as the content generation pipeline itself.

The Cost of Quality Failures

When programmatic SEO quality fails, the consequences are severe and often delayed. Google may take weeks or months to fully evaluate a large batch of new programmatic pages. During that period, initial indexation and early traffic can create a false sense of success. Then, as quality signals accumulate and algorithmic re-evaluation occurs, traffic drops precipitously — often affecting not just the programmatic pages but the entire domain’s ranking performance through the helpful content system’s site-wide classifier.

Recovery from a site-wide quality downgrade is slow and expensive. It typically requires significant content remediation — improving, consolidating or removing thousands of pages — followed by months of waiting for Google’s systems to reassess the site. For Singapore businesses dependent on organic traffic, this delay can represent substantial revenue loss. Prevention is dramatically more cost-effective than recovery.

What Triggers Thin Content Penalties at Scale

Understanding the specific patterns that trigger quality algorithm actions allows you to design systems that avoid them. While Google does not publish exact thresholds, analysis of affected sites reveals consistent patterns.

Template Dominance Over Unique Content

The most common thin content trigger in programmatic SEO is an unfavourable ratio of template (shared) content to unique (per-page) content. When 80 per cent of a page’s text is identical across thousands of URLs and only the business name, location or a few data points change, each page offers insufficient unique value to justify independent indexation. Google’s systems detect this pattern through content similarity analysis — effectively comparing pages within your own site to assess how much genuinely new information each page contributes.

The threshold is not fixed, but as a working guideline, aim for at least 40 to 50 per cent of visible text content to be unique per page. This does not mean simply spinning the same sentences with synonym substitution — Google’s natural language understanding detects paraphrasing. It means structurally different content that addresses the specific context of each page’s entity.
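To check a batch of rendered pages against this guideline before publishing, you can estimate each page’s unique share yourself. The sketch below assumes you already have each page’s visible main text as a string. It treats a sentence as “shared” if it appears verbatim on any other page and reports the share of words in unshared sentences. The function names and the sentence-level comparison are illustrative choices, not a description of how Google measures similarity. Because it only catches verbatim repetition, it will not catch the synonym-spun paraphrasing described above.

```python
import re
from collections import Counter


def sentences(text: str) -> list[str]:
    """Split visible text into normalised sentences (a deliberately crude splitter)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [re.sub(r"\s+", " ", p).lower() for p in parts if p]


def unique_ratios(pages: dict[str, str]) -> dict[str, float]:
    """Per URL, the share of words sitting in sentences found on no other page."""
    per_page = {url: sentences(text) for url, text in pages.items()}
    # Count how many distinct pages each sentence appears on.
    seen_on = Counter(s for sents in per_page.values() for s in set(sents))
    ratios = {}
    for url, sents in per_page.items():
        total = sum(len(s.split()) for s in sents)
        unique = sum(len(s.split()) for s in sents if seen_on[s] == 1)
        ratios[url] = unique / total if total else 0.0
    return ratios


pages = {
    "/plumbers/ace": "We list trusted plumbers. Ace Plumbing serves Tampines with 24-hour call-outs.",
    "/plumbers/bolt": "We list trusted plumbers. Bolt Pipes specialises in HDB bathroom renovations.",
}
ratios = unique_ratios(pages)
# Pages under the 40 per cent working guideline.
below_guideline = [url for url, r in ratios.items() if r < 0.4]
```

Both example pages come out at 7/11 (about 64 per cent) unique, because only the shared opening sentence is repeated.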

Data Gaps and Empty Fields

Template-driven pages with unfilled data fields produce broken, thin pages that damage site quality. A property listing template designed for 15 data fields that publishes a page when only 3 are populated creates a mostly empty page. At scale, hundreds of these incomplete pages dilute your site’s overall quality assessment. Programmatic systems must enforce minimum data completeness thresholds before any page is published.
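One way to enforce a minimum completeness threshold is to make a few fields mandatory and give every supplementary field a weight. The sketch below takes that approach. The field names, weights and the 0.6 threshold are hypothetical values for illustration only; tune them to your own template.

```python
# Hypothetical field names, weights and threshold: tune them to your own template.
MANDATORY = {"name", "category", "location"}
WEIGHTS = {"hours": 0.2, "pricing": 0.3, "certifications": 0.1,
           "description": 0.25, "photos": 0.15}
MIN_SCORE = 0.6


def is_filled(value) -> bool:
    """Treat None, blank strings and empty collections as missing data."""
    if value is None:
        return False
    if isinstance(value, str):
        return value.strip() != ""
    if isinstance(value, (list, tuple, set, dict)):
        return len(value) > 0
    return True


def completeness(record: dict) -> tuple[bool, float]:
    """Return (publishable, weighted score over the supplementary fields)."""
    # Any missing mandatory field blocks publication outright.
    if not all(is_filled(record.get(f)) for f in MANDATORY):
        return False, 0.0
    score = sum(w for f, w in WEIGHTS.items() if is_filled(record.get(f)))
    return score >= MIN_SCORE, round(score, 4)
```

A record with the mandatory fields plus pricing, a description and photos scores 0.7 and passes. A record with only hours and certifications scores 0.3 and is held back.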

Low Information Gain Per Page

Google’s information gain patent describes scoring how much new information a document offers relative to documents a user has already seen. A patent does not confirm how, or whether, Google uses the technique in ranking, but the principle is a useful test. If your programmatic pages only repeat information already available on the entities’ own websites, on Google Maps or on established reference sites, they add little or no information gain and have weak ranking justification. Each page must contain at least one substantive information element that is not readily available elsewhere.

Excessive Boilerplate

Sidebars, footers, navigation menus and promotional blocks that repeat on every page contribute to the template dominance problem. While search engines are generally sophisticated enough to identify and discount site-wide boilerplate, excessively heavy chrome — where the peripheral content outweighs the main content — can push pages below the quality threshold. Minimise per-page boilerplate for programmatic pages, reserving screen and HTML space for unique content.

Unique Value Injection Strategies

The core quality strategy for programmatic SEO is injecting unique value into every page through multiple channels. No single technique is sufficient — effective quality relies on layering multiple value sources.

Proprietary Data

The strongest form of unique value is data that no one else has. If your programmatic pages include pricing data from your own research, performance metrics from your own tracking, or ratings from your own evaluation framework, each page automatically contains information unavailable elsewhere. A directory of Singapore co-working spaces with proprietary noise-level measurements, desk availability data and real-time pricing creates pages that cannot be replicated by competitors using publicly available information.

Calculated and Derived Metrics

Even when your source data is publicly available, calculated metrics add unique value. Composite scores, rankings, percentile comparisons, trend analyses and statistical summaries transform raw data into insights. A programmatic page about a Singapore neighbourhood that calculates average property price per square foot, year-over-year price change, rental yield estimates and comparison to adjacent neighbourhoods creates unique analytical value from otherwise standard data.
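The neighbourhood example can be sketched as a single function that turns raw transactions into the derived figures a page would display. All inputs and parameter names here are hypothetical, and the yield is a simple gross figure (annual rent divided by value) that ignores costs.

```python
from statistics import mean


def derived_metrics(txns, last_year_psf, annual_rent, unit_price, neighbour_psf):
    """Illustrative derived metrics for one neighbourhood page.

    txns: (price_sgd, floor_area_sqft) pairs for this year's transactions.
    last_year_psf: last year's average price per square foot.
    annual_rent, unit_price: a representative unit's yearly rent and value.
    neighbour_psf: average price per square foot of adjacent neighbourhoods.
    """
    # Mean of per-transaction prices per square foot.
    psf = mean(price / area for price, area in txns)
    return {
        "avg_psf": round(psf, 2),
        "yoy_change_pct": round((psf / last_year_psf - 1) * 100, 1),
        "gross_rental_yield_pct": round(annual_rent / unit_price * 100, 2),
        "vs_neighbours_pct": round((psf / mean(neighbour_psf) - 1) * 100, 1),
    }


metrics = derived_metrics(
    txns=[(1_000_000, 1_000), (1_200_000, 1_000)],
    last_year_psf=1_000,
    annual_rent=42_000,
    unit_price=1_200_000,
    neighbour_psf=[950, 1_050],
)
# metrics == {"avg_psf": 1100.0, "yoy_change_pct": 10.0,
#             "gross_rental_yield_pct": 3.5, "vs_neighbours_pct": 10.0}
```

Each input is publicly obtainable. The combination, recomputed per neighbourhood, is what makes the page unique.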

User-Generated Content

Reviews, ratings, questions, comments and user-submitted data add unique, continuously refreshing content to programmatic pages. This is the strategy that powers TripAdvisor, Glassdoor and similar platforms — the programmatic template provides structure, while users provide the unique content that differentiates each page. Building a UGC engine requires significant upfront investment in community building but creates a self-sustaining quality advantage once established.

AI-Enhanced Descriptive Content

Language models can generate unique descriptive content for each page, transforming data points into natural language narratives. A programmatic page about a restaurant can use AI to generate a unique description based on its cuisine type, location, price range, notable dishes and available reviews — producing a paragraph that reads naturally and differs substantively from every other restaurant page. The critical requirement is validation: AI-generated content must be checked against source data for accuracy and reviewed for quality before publication.
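Part of that validation can be automated. The sketch below assumes the generated paragraph and the structured source record are both available. It blocks copy that omits the entity’s name, and copy that mentions numbers appearing nowhere in the source data, which is a common symptom of hallucinated ratings or prices. The record fields are hypothetical, and a check this narrow complements human review rather than replacing it.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def numbers_in(text: str) -> set[str]:
    # Drop thousands separators so "1,200" and 1200 compare equal.
    return set(NUMBER.findall(text.replace(",", "")))


def validate_generated(generated: str, source: dict) -> list[str]:
    """Return problems found; an empty list means these basic checks passed."""
    problems = []
    if source["name"] not in generated:
        problems.append("entity name missing")
    source_text = " ".join(str(v) for v in source.values())
    unsupported = numbers_in(generated) - numbers_in(source_text)
    if unsupported:
        problems.append(f"unsupported numbers: {sorted(unsupported)}")
    return problems


source = {"name": "Hawker Haven", "cuisine": "Peranakan", "rating": 4.5, "reviews": 120}
ok = validate_generated(
    "Hawker Haven serves Peranakan dishes and holds a 4.5 rating from 120 reviews.", source)
bad = validate_generated("Hawker Haven has a 4.8 rating from 120 reviews.", source)
```

Here `ok` is empty, while `bad` flags the invented 4.8 rating.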

Cross-Entity Comparisons and Context

Adding contextual comparisons creates unique content algorithmically. Each programmatic page can include dynamically generated sections comparing the entity to similar entities: “How [Business X] compares to other [category] providers in [location]” with specific data points. This comparative content is necessarily unique to each page because the comparison set changes. It also addresses a genuine user need — prospective customers want to understand relative positioning, not just absolute information.
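As a sketch, assume each entity record carries hypothetical `rating`, `price`, `category` and `location` fields. A comparison section can then be generated from the entity’s rank within its peer set. The peer list should exclude the entity itself. Because the peer set differs from page to page, so does the generated sentence.

```python
def percentile_rank(value: float, peers: list[float]) -> float:
    """Share of peers (0-100) with a strictly lower value."""
    if not peers:
        raise ValueError("need at least one peer")
    return 100 * sum(1 for p in peers if p < value) / len(peers)


def comparison_sentence(entity: dict, peers: list[dict]) -> str:
    """Build a 'how X compares' sentence; `peers` must not include the entity itself."""
    rating_pct = percentile_rank(entity["rating"], [p["rating"] for p in peers])
    cheaper_than = sum(1 for p in peers if p["price"] > entity["price"])
    return (f"{entity['name']} is rated higher than {rating_pct:.0f}% of "
            f"{len(peers)} other {entity['category']} providers in "
            f"{entity['location']}, and is cheaper than {cheaper_than} of them.")


entity = {"name": "Acme Aircon", "category": "aircon servicing", "location": "Jurong",
          "rating": 4.6, "price": 80}
peers = [{"rating": 4.2, "price": 100}, {"rating": 4.8, "price": 70},
         {"rating": 4.5, "price": 90}, {"rating": 3.9, "price": 60}]
sentence = comparison_sentence(entity, peers)
```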

Building Automated Quality Check Systems

Quality at scale demands automation. Manual review of 10,000 pages is impractical; automated quality gates that prevent substandard pages from reaching production are essential.

Pre-Publication Quality Gates

Implement quality checks that run automatically before any page is published. These gates should block publication if quality criteria are not met, not merely flag issues for later review. Essential pre-publication checks include:

- Word count validation: Reject pages below your minimum unique content threshold. Set this threshold based on competitive analysis — if ranking pages in your niche average 800 words of unique content, your minimum should be comparable.

- Data completeness scoring: Assign weights to each data field and require a minimum composite score for publication. Critical fields (name, primary category, location) should be mandatory; supplementary fields (hours, pricing, certifications) should contribute to the score.

- Duplicate content detection: Compare each new page against existing pages using similarity algorithms (simhash, cosine similarity on TF-IDF vectors). Reject pages that exceed a similarity threshold — typically 70 to 80 per cent text similarity with any existing page.

- Template rendering validation: Verify that all template variables resolved correctly. Empty variables, placeholder text and rendering errors should block publication.

- Technical SEO compliance: Validate HTML structure, meta tags, canonical URLs, schema markup and internal links. Use automated tools like Lighthouse CI or custom scripts to enforce technical standards.
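A few of these checks can be combined into one blocking gate, sketched below. It covers a word-count floor, unresolved template variables and near-duplicate detection. The sketch swaps in Jaccard similarity over three-word shingles instead of simhash or TF-IDF cosine, because Jaccard is easier to reason about. The thresholds, the placeholder patterns and the function names are all assumptions to adapt.

```python
import re

# Unresolved "{{ ... }}" syntax, "[Business Name]"-style placeholders and JS/pandas
# null renderings. This may also flag legitimate bracketed text, so tune it per template.
PLACEHOLDER = re.compile(r"\{\{.*?\}\}|\[[A-Z][A-Za-z ]*\]|\b(?:undefined|NaN)\b")


def shingles(text: str, k: int = 3) -> set[str]:
    """Overlapping k-word shingles of the lower-cased text."""
    words = re.findall(r"\w+", text.lower())
    return {" ".join(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def prepublish_gate(text: str, existing: dict[str, str],
                    min_words: int = 300, max_similarity: float = 0.75) -> list[str]:
    """Return blocking reasons; an empty list means the page may be published."""
    reasons = []
    n_words = len(re.findall(r"\w+", text))
    if n_words < min_words:
        reasons.append(f"too short: {n_words} words")
    if PLACEHOLDER.search(text):
        reasons.append("unresolved template variable")
    page = shingles(text)
    # Comparing against every existing page is quadratic across a batch;
    # at large scale, MinHash with locality-sensitive hashing or simhash avoids that.
    for url, other in existing.items():
        sim = jaccard(page, shingles(other))
        if sim > max_similarity:
            reasons.append(f"near-duplicate of {url} ({sim:.0%})")
    return reasons
```

For example, `prepublish_gate("Welcome to [Business Name] in {{ area }}.", {}, min_words=5)` returns `["unresolved template variable"]`. The point of returning reasons rather than a boolean is that the publishing pipeline can log why a page was held back.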

Post-Publication Monitoring

Quality checks do not end at publication. Implement ongoing monitoring that catches quality degradation over time:

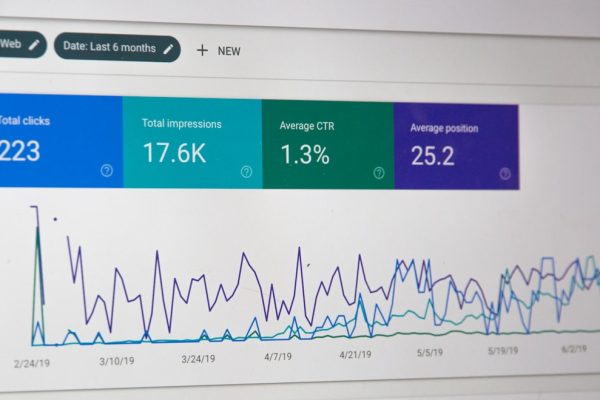

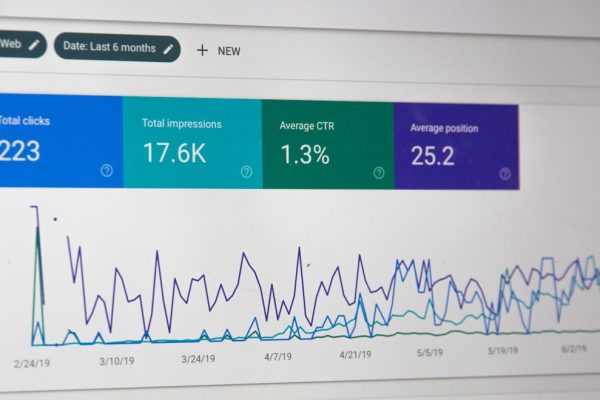

- Indexation monitoring: Track the ratio of indexed pages to published pages in Google Search Console. A declining indexation rate often indicates that Google is choosing not to index pages it considers low-quality.

- Crawl pattern analysis: Monitor which pages Google crawls most and least frequently. Pages that Google stops crawling may have been assessed as low-value.

- User engagement tracking: Monitor bounce rates, time on page and pages per session for programmatic page categories. Consistently poor engagement metrics across a category suggest quality issues.

- Search Console performance alerts: Set up automated alerts for significant drops in impressions or clicks for programmatic page groups.
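Crawl pattern analysis is often easiest from your own server logs. The sketch below assumes access logs in the Combined Log Format. It finds, per path, the most recent request whose user agent claims to be Googlebot, then lists published pages not crawled within a window. User-agent strings can be spoofed, so Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup. The 30-day window and the example paths are hypothetical.

```python
import re
from datetime import datetime, timedelta, timezone

# Combined Log Format, e.g.:
# 66.249.66.1 - - [01/Mar/2024:10:00:00 +0800] "GET /path HTTP/1.1" 200 512 "-" "UA"
LINE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def last_googlebot_crawl(lines):
    """Map each path to its latest request whose user agent claims to be Googlebot."""
    last = {}
    for line in lines:
        m = LINE.search(line)
        if not m or "Googlebot" not in m["ua"]:
            continue
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
        if m["path"] not in last or ts > last[m["path"]]:
            last[m["path"]] = ts
    return last


def stale_pages(published, last_crawl, now, days=30):
    """Published paths with no Googlebot request in the last `days` days."""
    cutoff = now - timedelta(days=days)
    return sorted(p for p in published if p not in last_crawl or last_crawl[p] < cutoff)


SGT = timezone(timedelta(hours=8))
BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
logs = [
    f'66.249.66.1 - - [01/Mar/2024:10:00:00 +0800] "GET /sg/plumbers/tampines HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [20/Apr/2024:10:00:00 +0800] "GET /sg/plumbers/bedok HTTP/1.1" 200 5120 "-" "{BOT}"',
    '203.0.113.9 - - [21/Apr/2024:10:00:00 +0800] "GET /sg/plumbers/jurong HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]
stale = stale_pages(["/sg/plumbers/tampines", "/sg/plumbers/bedok", "/sg/plumbers/jurong"],
                    last_googlebot_crawl(logs), now=datetime(2024, 4, 25, tzinfo=SGT))
```

In this example, the Jurong page (never crawled by Googlebot) and the Tampines page (last crawled in early March) are reported as stale.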

Quality Scoring Models

Develop a composite quality score for each programmatic page that combines multiple quality signals into a single metric. This score should incorporate content length, uniqueness ratio, data completeness, user engagement metrics and indexation status. Pages falling below the quality threshold should be flagged for improvement or removal. Track the distribution of quality scores across your portfolio — the goal is to continuously shift the distribution upward.
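A composite score can be as simple as a weighted sum of normalised signals. The sketch below flags pages under a threshold and buckets the scores into deciles, so you can track the distribution over time. The weights, the 0.5 threshold and the signal names are hypothetical. It also assumes each signal has already been normalised to the range 0 to 1, for example word count divided by your target length and capped at 1.

```python
from collections import Counter

# Hypothetical weights; every signal is assumed pre-normalised to the range 0..1.
WEIGHTS = {"length": 0.15, "uniqueness": 0.30, "completeness": 0.25,
           "engagement": 0.20, "indexed": 0.10}
THRESHOLD = 0.5


def quality_score(signals: dict[str, float]) -> float:
    return sum(WEIGHTS[k] * signals[k] for k in WEIGHTS)


def score_portfolio(pages: dict[str, dict[str, float]]):
    """Score every page, flag those under THRESHOLD and bucket scores into deciles."""
    scores = {url: quality_score(s) for url, s in pages.items()}
    flagged = sorted(url for url, s in scores.items() if s < THRESHOLD)
    # Decile buckets 0..9 (a perfect 1.0 lands in bucket 9); compare these over time.
    deciles = Counter(min(int(s * 10), 9) for s in scores.values())
    return scores, flagged, deciles


pages = {
    "/a": {"length": 1, "uniqueness": 1, "completeness": 1, "engagement": 1, "indexed": 1},
    "/b": {"length": 0.5, "uniqueness": 0.2, "completeness": 0.6, "engagement": 0.3, "indexed": 0},
}
scores, flagged, deciles = score_portfolio(pages)
```

Here `/b` scores about 0.35 and is flagged for improvement or removal.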

Content Differentiation Across Pages

Creating genuine differentiation across thousands of pages generated from the same template requires deliberate architectural decisions. The template itself must be designed to produce varied output, not just substituted output.

Conditional Content Blocks

Design templates with conditional sections that appear or disappear based on data attributes. A programmatic page for a business with reviews should display a review summary section; a page without reviews should display a “request first review” prompt instead. A business with pricing data gets a pricing comparison section; one without pricing gets a “request quote” functionality. These conditional blocks create structural variation that makes pages feel less templated.
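A simple way to keep such conditions maintainable is a small rules table, sketched below. Each rule pairs a predicate on the entity record with the block to render when it holds, plus an optional fallback block. The block and field names are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical rules: (predicate on the entity record, block if true, fallback or None).
RULES: list[tuple[Callable[[dict], bool], str, Optional[str]]] = [
    (lambda e: bool(e.get("reviews")), "review_summary", "request_first_review"),
    (lambda e: bool(e.get("pricing")), "pricing_comparison", "request_quote"),
    (lambda e: len(e.get("photos", [])) >= 3, "photo_gallery", None),
]


def build_sections(entity: dict) -> list[str]:
    """Pick which template blocks to render, based on the data the entity actually has."""
    sections = ["overview"]
    for predicate, block, fallback in RULES:
        chosen = block if predicate(entity) else fallback
        if chosen:
            sections.append(chosen)
    return sections
```

A business with reviews but no pricing renders `["overview", "review_summary", "request_quote"]`. Adding a new conditional block then means adding one rule rather than editing template logic.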

Data-Driven Content Variation

Use data values to drive narrative variation, not just variable substitution. Instead of “[Business Name] is located in [Area],” use conditional logic: if the business is in the CBD, discuss accessibility from MRT stations and proximity to business hubs; if in a heartland location, discuss parking availability and neighbourhood character. The same template field (location) produces substantively different content depending on the value, creating genuine per-page uniqueness.
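The CBD-versus-heartland example might look like the sketch below, which writes a different sentence depending on the area. The `CBD_AREAS` set and the record fields are hypothetical; a real pipeline would keep its own maintained area classification. Each branch only mentions details that are actually present in the record.

```python
# Hypothetical lookup; a real pipeline would maintain its own area classification.
CBD_AREAS = {"Raffles Place", "Tanjong Pagar", "Marina Bay"}


def location_paragraph(b: dict) -> str:
    """Different narrative per location type, using only fields present in the record."""
    if b["area"] in CBD_AREAS:
        text = f"{b['name']} is in {b['area']}, in the CBD"
        if b.get("nearest_mrt") and b.get("walk_minutes") is not None:
            text += f", a {b['walk_minutes']}-minute walk from {b['nearest_mrt']} MRT station"
        return text + "."
    text = f"{b['name']} serves the {b['area']} neighbourhood"
    if b.get("parking"):
        text += f", with {b['parking']}"
    return text + "."
```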

Dynamic Relationship Content

Each page can include dynamically generated content based on the entity’s relationships to other entities in your database. “Nearby alternatives,” “frequently compared with,” “also in this category” — these sections produce unique content on every page because the relationship context differs. More importantly, they serve genuine user needs, helping visitors discover relevant alternatives and make informed decisions.
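A “nearby alternatives” section can be computed from coordinates, as in the sketch below. It ranks same-category entities by great-circle (haversine) distance and keeps those within a radius. The record fields, the 5 km radius and the limit of three results are hypothetical choices.

```python
from math import asin, cos, radians, sin, sqrt


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres, using a mean Earth radius of 6371 km."""
    dlat, dlon = radians(lat2 - lat1), radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * 6371 * asin(sqrt(a))


def nearby_alternatives(entity: dict, others: list[dict], k: int = 3, max_km: float = 5.0):
    """Closest same-category entities (excluding the entity itself), as (name, km) pairs."""
    candidates = [o for o in others
                  if o["id"] != entity["id"] and o["category"] == entity["category"]]
    by_distance = sorted(
        (haversine_km(entity["lat"], entity["lon"], o["lat"], o["lon"]), o["name"])
        for o in candidates)
    return [(name, round(d, 1)) for d, name in by_distance if d <= max_km][:k]


entity = {"id": 1, "name": "Ace", "category": "cafe", "lat": 1.3000, "lon": 103.8000}
others = [
    entity,
    {"id": 2, "name": "A", "category": "cafe", "lat": 1.3090, "lon": 103.8000},
    {"id": 3, "name": "B", "category": "cafe", "lat": 1.3000, "lon": 103.9000},
    {"id": 4, "name": "C", "category": "gym", "lat": 1.3001, "lon": 103.8000},
    {"id": 5, "name": "D", "category": "cafe", "lat": 1.3045, "lon": 103.8000},
]
result = nearby_alternatives(entity, others)
```

D (about 0.5 km) and A (about 1 km) are returned. B is roughly 11 km away and falls outside the radius, and C is excluded because it is in a different category.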

Temporal Variation

Incorporate time-sensitive data that changes regularly. “Last updated,” “recent activity,” “trending in [category]” and seasonal relevance signals add freshness that both users and search engines value. A content marketing approach that incorporates temporal elements keeps programmatic pages from becoming stale artefacts.

The Human Editorial Layer

Automation handles scale; humans handle nuance. Even the most sophisticated automated quality systems miss contextual issues that human editors catch intuitively. The human editorial layer is not a luxury — it is a quality requirement.

Statistical Sampling Protocols

Define a sampling protocol that ensures representative human review without requiring review of every page. For new template launches, review 10 to 20 per cent of generated pages before full publication. For ongoing production from established templates, review 2 to 5 per cent of new pages monthly. Stratify your sample across data categories — do not just review high-traffic pages. Quality issues often hide in edge cases and low-volume categories.
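Stratification is straightforward to automate. The sketch below draws the same rate from every category, with at least one page per category, so small categories are never skipped. It assumes each page record has a `category` field; the seed only makes a draw reproducible.

```python
import math
import random
from collections import defaultdict


def stratified_sample(pages: list[dict], rate: float, seed=None) -> list[dict]:
    """Sample `rate` of pages within each category, with at least one page per category."""
    rng = random.Random(seed)
    by_category = defaultdict(list)
    for page in pages:
        by_category[page["category"]].append(page)
    sample = []
    for _, items in sorted(by_category.items()):
        n = max(1, math.ceil(len(items) * rate))
        sample.extend(rng.sample(items, n))
    return sample


pages = ([{"url": f"/dentists/{i}", "category": "dentists"} for i in range(100)]
         + [{"url": f"/orthodontists/{i}", "category": "orthodontists"} for i in range(3)])
monthly_review = stratified_sample(pages, rate=0.05, seed=42)  # 5 dentists + 1 orthodontist
```

For a new template launch you would call the same function with a rate between 0.10 and 0.20.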

Editorial Quality Criteria

Give human reviewers specific, measurable criteria. Subjective assessments like “does this feel high-quality?” produce inconsistent results. Instead, define concrete questions: Does the page answer the implied user query? Is the unique content factually accurate? Does the page provide information not available on the entity’s own website? Are there any template rendering errors? Would you recommend this page to a colleague seeking this information? Score each criterion and track scores over time.

Feedback Loops to Engineering

Human review findings must flow back into template and system improvements. If editors consistently flag a particular section as weak, the template needs revision. If data quality issues recur in a specific category, the data pipeline needs improvement. Without this feedback loop, human review becomes a cost without a return — catching the same issues repeatedly without preventing them. Formalise the process: editors file quality issues, engineers prioritise and resolve them, and the next review cycle verifies the fix.

Subject Matter Expert Review

For programmatic content in specialist domains — medical, legal, financial — periodic review by subject matter experts is essential. These experts validate not just factual accuracy but contextual appropriateness. A programmatic page about a legal service that is technically accurate but contextually misleading could expose your business to legal liability and reputational damage. In Singapore’s regulated environment, expert review is particularly important for content touching financial services, healthcare and legal advice.

Performance Monitoring and Early Warning Systems

Quality issues in programmatic SEO often manifest gradually. A slow decline in indexation rates, a creeping increase in bounce rates or a subtle downward trend in average position — these signals are easy to miss without systematic monitoring. Early detection prevents small problems from becoming site-wide crises.

Key Quality Indicators

Monitor these metrics at the category and template level, not just site-wide:

- Indexation ratio: Percentage of published programmatic pages that Google has indexed. A healthy ratio is above 90 per cent; below 70 per cent indicates significant quality concerns.

- Crawl frequency: How often Google revisits your programmatic pages. Declining crawl frequency suggests Google is deprioritising your content.

- Impressions per indexed page: Total search impressions divided by the number of indexed pages. This measures how actively Google serves your pages in search results; pages that are indexed but rarely shown may have quality issues.

- Click-through rate by template: Compare CTRs across different page templates. Templates with consistently low CTRs may have title tag or meta description issues.

- Engagement metrics by category: Bounce rate, time on page and pages per session segmented by programmatic page category. Categories with poor engagement warrant quality investigation.
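The template-level indicators above can be rolled up from per-URL rows, as in the sketch below. It assumes you have already joined, per URL, its template name, whether it is indexed (for example from URL Inspection results) and its Search Console impressions and clicks. The status labels follow the 90 and 70 per cent guidelines; the intermediate “watch” band is an added assumption.

```python
from collections import defaultdict


def template_kpis(rows: list[dict]) -> dict[str, dict]:
    """rows: one dict per URL with template, indexed (bool), impressions and clicks."""
    agg = defaultdict(lambda: {"published": 0, "indexed": 0, "impressions": 0, "clicks": 0})
    for r in rows:
        a = agg[r["template"]]
        a["published"] += 1
        a["indexed"] += int(r["indexed"])
        a["impressions"] += r["impressions"]
        a["clicks"] += r["clicks"]
    report = {}
    for template, a in agg.items():
        ratio = a["indexed"] / a["published"]
        report[template] = {
            "indexation_ratio": ratio,
            "status": "healthy" if ratio > 0.9 else "concern" if ratio < 0.7 else "watch",
            "impressions_per_indexed": a["impressions"] / a["indexed"] if a["indexed"] else 0.0,
            "ctr": a["clicks"] / a["impressions"] if a["impressions"] else 0.0,
        }
    return report


rows = ([{"template": "city", "indexed": True, "impressions": 100, "clicks": 5}] * 3
        + [{"template": "city", "indexed": False, "impressions": 0, "clicks": 0}] * 2)
report = template_kpis(rows)  # "city": 60% indexed, so status "concern"
```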

Automated Alerting

Configure automated alerts for significant metric changes. A 20 per cent decline in indexed pages over a two-week period, a sudden drop in crawl frequency or an engagement metric falling below your defined threshold should trigger immediate investigation. Use the Google Search Console API with custom scripts, or third-party monitoring platforms that support custom alert rules. For businesses managing digital marketing at scale, these alerts are as important as uptime monitoring for your website.
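The two-week rule is a few lines of code once you have the data. However, the Search Console API (Search Analytics, Sitemaps, Sites and URL Inspection) does not expose the Page indexing report’s totals. This sketch therefore assumes you record a daily count of indexed programmatic pages yourself, for example by sampling URLs through the URL Inspection API. The function name and message format are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional


def decline_alert(history: dict[date, int], today: date,
                  window_days: int = 14, threshold: float = 0.20) -> Optional[str]:
    """Alert text if indexed pages fell by `threshold` or more over `window_days`."""
    past = history.get(today - timedelta(days=window_days))
    current = history.get(today)
    if past is None or current is None or past == 0:
        return None  # Not enough data to compare.
    drop = (past - current) / past
    if drop >= threshold:
        return f"Indexed pages down {drop:.0%} in {window_days} days ({past} -> {current})"
    return None


history = {date(2024, 5, 1): 5000, date(2024, 5, 15): 3900}
alert = decline_alert(history, today=date(2024, 5, 15))
```

Here `alert` reports a 22 per cent drop. In practice you would send the message to the same channel your uptime alerts use.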

Google Algorithm Update Correlation

Track Google’s confirmed and suspected algorithm updates against your programmatic page performance. Tools like Semrush Sensor, Moz’s algorithm tracker and industry forums provide update timelines. If your programmatic pages experience performance changes coinciding with quality-focused updates (helpful content, core updates), this strongly suggests quality issues that need addressing. Maintain a log of update dates and your corresponding performance data to identify patterns over time.

Competitive Benchmarking

Monitor how competing programmatic sites perform relative to yours. If a competitor’s programmatic pages are gaining visibility while yours are declining, analyse what differentiates their approach. Are their pages substantively richer? Do they have more user-generated content? Is their data more comprehensive? Competitive analysis provides both early warning of your own quality issues and inspiration for quality improvements.

Recovery Strategies When Quality Fails

Despite best efforts, quality failures happen. When they do, the response speed and strategy determine whether recovery takes weeks or months.

Diagnosing the Quality Issue

Before remediation, accurately diagnose the problem. Common diagnostic steps:

- Segment analysis: Identify which programmatic page categories are affected. If the decline is isolated to specific categories, the issue is likely content or data quality in those categories rather than a site-wide problem.

- Content audit: Compare affected pages against unaffected pages. What differs in content depth, uniqueness, data completeness and user engagement?

- Technical audit: Verify that affected pages have no technical SEO issues — rendering problems, canonical errors, noindex tags accidentally applied.

- Timeline correlation: Map the performance decline against your publishing timeline, algorithm updates and any site changes. Identifying the trigger narrows the diagnosis.

Triage: Improve, Consolidate or Remove

Not every programmatic page is worth saving. Categorise affected pages into three groups:

- Improve: Pages with adequate data that need richer content, better unique value or template improvements. These are worth the investment in remediation.

- Consolidate: Pages that are too similar to each other or too thin individually but contain useful information when combined. Merge these into fewer, stronger pages and 301-redirect the retired URLs to the page that absorbs their content.

- Remove: Pages with insufficient data, no search demand and no realistic path to quality. Noindex them, or remove them entirely and return a 404 or 410 status. Pruning low-quality pages can improve Google’s overall assessment of the site.
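The triage rules and the consolidation redirects can both be scripted, as sketched below. The per-page inputs (completeness score, monthly searches, highest similarity to a sibling page, unique word count) and every threshold are hypothetical. For consolidation, the sketch keeps the highest-traffic URL in each group of near-duplicates and maps the others to it for 301 redirects.

```python
def triage(page: dict) -> str:
    """Assign 'remove', 'consolidate' or 'improve' using illustrative thresholds."""
    if page["completeness"] < 0.3 and page["monthly_searches"] == 0:
        return "remove"
    if page["max_similarity"] > 0.75 or page["unique_words"] < 150:
        return "consolidate"
    return "improve"


def redirect_map(groups: list[list[tuple[str, int]]]) -> dict[str, str]:
    """groups: lists of (url, monthly_visits); 301 every URL to its group's top URL."""
    mapping = {}
    for group in groups:
        target, _ = max(group, key=lambda item: item[1])
        for url, _ in group:
            if url != target:
                mapping[url] = target
    return mapping


redirects = redirect_map([[("/sg/aircon/bedok-north", 40), ("/sg/aircon/bedok", 300),
                           ("/sg/aircon/bedok-south", 10)]])
```

Checking demand before similarity means a page with no data and no searches is removed outright rather than merged into a stronger page.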

Staged Remediation

Do not attempt to fix thousands of pages simultaneously. Prioritise by traffic impact: remediate the highest-traffic categories first, then work through remaining categories in order of potential value. Monitor the impact of each remediation batch before proceeding to the next. This staged approach lets you validate your remediation strategy and adjust if initial efforts do not produce the expected improvements.

Communicating With Stakeholders

Quality recovery takes time — typically three to six months for Google’s systems to fully reassess a site after significant quality improvements. Set realistic expectations with stakeholders. Provide regular progress updates tracking the leading indicators (quality scores, indexation ratios, crawl patterns) while acknowledging that traffic recovery lags behind content improvements. Transparency about the timeline prevents pressure to cut corners, which risks repeating the quality failure that caused the problem.

Maintaining programmatic SEO quality is an ongoing discipline, not a one-time effort. The systems, processes and culture you build around quality determine the long-term sustainability of your programmatic content. Invest in quality infrastructure with the same seriousness you invest in content production, and your programmatic pages will deliver compounding organic traffic for years. Neglect quality, and you risk not just losing programmatic traffic but damaging your entire domain’s SEO performance.

Frequently Asked Questions

How does Google detect thin programmatic content?

Google uses multiple signals including content similarity analysis across pages within a site, information gain assessment relative to existing indexed pages, user engagement patterns and quality classifier models trained on human-rated examples. The helpful content system applies a site-wide classifier that can suppress an entire domain if a significant proportion of its content is deemed unhelpful. Programmatic sites with thousands of thin pages are particularly vulnerable because the volume of low-quality signals overwhelms positive signals from other content.

What is the minimum unique content percentage per page?

There is no published threshold, but analysis of successful programmatic sites suggests that at least 40 to 50 per cent of visible text content should be unique to each page. This includes unique descriptive content, entity-specific data presentations, reviews or user-generated content and dynamically generated comparison sections. The remaining shared content (navigation, template framework, category descriptions) should be minimised on programmatic pages.

Can I use AI to generate unique content for programmatic pages?

Yes, but with robust validation. AI-generated content adds unique text to each page, addressing the template repetition problem. However, AI content must be validated against source data for factual accuracy, checked for hallucinations and reviewed for quality. Unvalidated AI content at scale creates a different quality problem — factual inaccuracy — that can be equally damaging to user trust and search performance.

How many programmatic pages can I publish safely?

There is no fixed limit. Google does not penalise sites for having many pages — it penalises sites for having many low-quality pages. A site with 100,000 pages that each provide genuine unique value will perform well. A site with 1,000 thin pages will underperform. The constraint is your ability to maintain quality at scale, not an arbitrary page count limit. Publish only pages that meet your quality criteria, regardless of how many that produces.

What should I do if my programmatic pages are not getting indexed?

Low indexation rates typically indicate quality issues. First, verify there are no technical barriers (robots.txt blocks, noindex tags, crawl errors). Then assess content quality — are the pages substantively unique and useful? Improve content quality on a sample of non-indexed pages and monitor whether indexation improves. Also ensure strong internal linking to programmatic pages from indexed hub pages, and submit updated sitemaps. If quality improvements do not improve indexation, consider reducing your page count to only the highest-quality pages.

How often should I audit programmatic content quality?

Run automated quality checks continuously as part of your publishing pipeline. Conduct manual sampling reviews monthly for established templates and more frequently (weekly) during new template launches. Perform comprehensive audits quarterly, reviewing quality scores, indexation trends, engagement metrics and competitive positioning across all programmatic page categories. After any Google algorithm update, conduct an immediate assessment of programmatic page performance.

Should I noindex programmatic pages that do not meet quality standards?

Yes. It is better to have fewer indexed, high-quality pages than many indexed, low-quality pages. The helpful content system evaluates overall site quality, so low-quality pages harm the performance of your high-quality pages. Noindex pages that fail quality checks and either improve them to meet standards or remove them entirely. A smaller portfolio of strong pages outperforms a larger portfolio diluted by weak ones.

How do I add unique value when my data is publicly available?

Transform public data into insights: calculate derived metrics, build comparison frameworks, add editorial analysis, generate contextual narratives and incorporate user-generated content. A page about a publicly listed company that synthesises financial data into accessible analysis, compares performance against industry benchmarks and includes analyst commentary adds substantial value beyond the raw data that anyone could access from the same public sources.

What is the difference between a helpful content penalty and a spam penalty?

The helpful content system applies a site-wide quality classifier that demotes sites producing unhelpful content; it is algorithmic, automatic and affects the entire domain’s ranking ability. Spam is handled both algorithmically (for example by Google’s SpamBrain system) and through manual actions, which human reviewers issue for violations like hidden text, cloaking or doorway pages. Manual actions are reported in Search Console’s Manual actions report and can apply to specific pages or sections or to the whole site. Thin programmatic content usually suffers algorithmic demotion rather than a manual action. However, Google does have a dedicated manual action for “thin content with little or no added value”, and extremely low-quality doorway-style pages can trigger both.

How long does recovery from a quality-related ranking decline take?

Expect roughly three to six months after substantive quality improvements are implemented. Google said the helpful content classifier ran continuously, but many site owners reported recoveries only around later core updates, so improvements may not be reflected straight away. During this period, focus on continuing quality improvements, monitoring leading indicators and building positive quality signals. Some sites report partial recovery within two to three months, with full recovery taking six to twelve months for severe quality issues.