NLP and SEO: How Google Understands Language and What It Means for Your Content

What Is NLP in Search

Natural language processing (NLP) is the branch of artificial intelligence concerned with enabling computers to understand, interpret and generate human language. In the context of search engines, NLP is the technology that allows Google to understand what your content means — not just what words it contains — and to match that meaning against the intent behind user queries.

Google has invested more in NLP research and deployment than arguably any other organisation in the world. Its research lab has produced foundational advances including the Transformer architecture (the “T” in BERT and GPT), BERT itself, T5, LaMDA, PaLM, and Gemini. These models are not abstract research projects. They are integrated into Google Search, processing billions of queries daily and evaluating trillions of web pages for relevance and quality.

For SEO professionals, understanding NLP is not about learning to code machine learning models. It is about understanding what these systems can and cannot detect in your content, what signals they prioritise, and how to write content that aligns with machine language understanding rather than fighting against it.

The practical implication is straightforward: content that is well-written, clearly structured, topically comprehensive, and genuinely authoritative is exactly what NLP models are designed to identify and reward. The era of gaming search engines with keyword tricks is decisively over. NLP-driven search rewards content quality at a level that previous algorithm generations could not achieve.

Google’s NLP Evolution: From Hummingbird to MUM

Google’s NLP capabilities have evolved through several distinct generations, each representing a significant leap in language understanding.

Hummingbird (2013): Semantic Query Processing

The Hummingbird update was Google’s first major step toward semantic search. Rather than breaking queries into individual keywords and matching them independently, Hummingbird processed the full meaning of a query as a coherent statement. “Where can I buy a good laptop near Marina Bay” was no longer decomposed into “buy” + “laptop” + “Marina Bay” but understood as a single semantic unit expressing commercial intent for a product in a specific location.

Hummingbird was significant because it changed what Google was even trying to do. Before Hummingbird, the goal was keyword matching. After Hummingbird, the goal was meaning matching.

RankBrain (2015): Machine Learning for Query Understanding

RankBrain introduced machine learning to query processing. For the first time, Google used neural networks to understand previously unseen queries by relating them to similar queries Google had already processed. RankBrain was particularly effective for long-tail queries, ambiguous queries, and conversational queries that traditional rule-based systems struggled with.

RankBrain converted queries and documents into mathematical vectors — numerical representations in a high-dimensional semantic space. Queries and documents with similar vectors were considered semantically related, even if they shared few or no keywords. This was the beginning of vector-based semantic matching in Google Search.
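As a rough illustration of vector-based matching, the sketch below ranks two documents against a query by cosine similarity. The four-dimensional vectors are invented for this example; real systems use learned embeddings with hundreds of dimensions, and nothing here reflects Google's actual implementation.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, made up purely for illustration.
EMBEDDINGS = {
    "query: cheap flights to bali": [0.9, 0.1, 0.8, 0.0],
    "doc: budget airfare deals for Bali trips": [0.8, 0.2, 0.9, 0.1],
    "doc: how to repot an orchid": [0.0, 0.9, 0.1, 0.8],
}


def rank_documents(query_key, embeddings):
    """Return (score, document) pairs for every 'doc:' entry, best match first."""
    query_vec = embeddings[query_key]
    scored = [
        (cosine_similarity(query_vec, vec), key)
        for key, vec in embeddings.items()
        if key.startswith("doc:")
    ]
    return sorted(scored, reverse=True)
```

In this toy data the airfare document scores far higher than the orchid document, even though it shares only one word ("Bali") with the query. That is the essence of semantic matching.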

Neural Matching (2018): Understanding Concepts

Neural matching extended machine learning from query understanding to the broader task of matching queries to documents. While RankBrain focused on interpreting the query, neural matching focused on understanding the conceptual relationship between a query and a page’s content. Google described it as a “super synonyms” system: a query such as “why does my TV look strange” can surface a page about the “soap opera effect,” even though the query and the page barely share any terms.

BERT (2019): Bidirectional Language Understanding

BERT represented the most significant leap in Google’s NLP capability. We will cover BERT in detail in the next section, but its core contribution was enabling Google to understand the nuances of how words interact in context, reading sentences bidirectionally rather than left-to-right or right-to-left.

MUM (2021): Multilingual, Multimodal Understanding

MUM is the most advanced publicly announced NLP model in Google Search. We will cover MUM in detail in its own section below.

BERT: How Transformers Changed Search

BERT (Bidirectional Encoder Representations from Transformers) is the NLP model that most fundamentally changed how Google processes both queries and content. Understanding BERT is essential for modern SEO practice.

How BERT Processes Language

BERT reads text bidirectionally — considering words both before and after a target word to understand its meaning. Previous models read text sequentially, which limited their ability to understand complex sentence structures. Consider the sentence: “The bank by the river was a good spot to fish.” A sequential model might associate “bank” with financial institutions. BERT, reading the full context bidirectionally, correctly identifies “bank” as a riverbank.

This bidirectional understanding enables BERT to parse nuance, prepositions, negations, and complex sentence structures that sequential models miss. A single preposition can completely change a query’s meaning. In Google’s own launch example, “2019 brazil traveler to usa need a visa,” the word “to” signals a Brazilian travelling to the United States, not an American travelling to Brazil. Earlier systems tended to ignore such short function words. BERT detects these distinctions.

What BERT Means for Content

BERT’s language understanding has several practical implications for content creation. First, natural language is now preferred over keyword-optimised phrasing. Writing “best digital marketing agency Singapore” (keyword-style) provides no advantage over “the best digital marketing agency in Singapore” (natural English). BERT understands both equally well, and the natural phrasing provides a better user experience.

Second, BERT can evaluate content quality at a linguistic level. Poorly written content with grammatical errors, awkward phrasing, and incoherent structure is more difficult for BERT to process and is less likely to be assessed as high quality. Well-written, clearly structured content is easier for BERT to parse and evaluate positively.

Third, BERT evaluates content at a granular level. Individual sentences, paragraphs and passages are assessed for relevance, not just the page as a whole. This means every section of your content should be substantive and on-topic. Filler content that pads word count without adding meaning is not just unhelpful for users — it is detectable by BERT as low-quality padding.

BERT and Featured Snippets

BERT has significantly improved Google’s ability to identify the specific passage on a page that best answers a query. This directly powers featured snippet selection. When Google shows a featured snippet, it has used BERT-level language understanding to identify the passage that most directly and completely answers the query.

To optimise for featured snippets in a BERT-driven search environment, structure your content so that individual sections provide clear, complete answers to specific questions. A well-written paragraph that directly addresses “what is entity SEO” is a strong featured snippet candidate for that query.

MUM and Multimodal Understanding

MUM (Multitask Unified Model) is Google’s most powerful publicly deployed NLP system, described by Google as 1,000 times more powerful than BERT. While BERT excels at understanding individual queries and passages, MUM handles complex, multi-step information needs across languages and formats.

MUM’s Key Capabilities

Multilingual understanding: MUM was trained across 75 languages simultaneously. It can transfer knowledge across languages, understanding that a high-quality resource in Chinese about a topic is relevant to an English query about the same topic. For Singapore’s multilingual market, this is particularly significant — MUM can evaluate content quality across English, Chinese, Malay, and Tamil.

Multimodal processing: MUM can understand information across text, images, and potentially other formats. While full multimodal deployment is still rolling out, MUM represents Google’s move toward understanding content holistically rather than as isolated text on a page.

Complex query decomposition: MUM can break complex queries into sub-tasks, process each sub-task, and synthesise the results. A complex query like “I’ve hiked Mount Rinjani and want to hike Mount Fuji next, what should I do differently to prepare?” requires understanding multiple entities, comparing attributes, and generating tailored advice. MUM handles this level of complexity.

MUM’s Implications for SEO

MUM raises the bar for content quality and comprehensiveness. If MUM can access and understand information across 75 languages, your content is being compared against the best resources available globally, not just in your language. This means that superficial content that might have ranked in a purely English-language evaluation is now competing against comprehensive resources from across the world.

For Singapore businesses, MUM creates both a challenge and an opportunity. The challenge is higher quality expectations. The opportunity is that Singapore’s multilingual content ecosystem — with resources in English, Chinese, Malay, and Tamil — is now part of Google’s broader understanding, and multilingual content strategies can leverage this.

MUM also makes comprehensive content marketing more important than ever. Content that addresses complex queries with genuine depth, multiple perspectives, and practical guidance is exactly what MUM is designed to surface.

Passage Ranking and Granular Content Evaluation

Passage ranking, announced in October 2020 and launched for US English queries in February 2021, is one of the most practically important NLP-driven changes to Google Search. It fundamentally changes how content is evaluated and surfaced.

How Passage Ranking Works

Before passage ranking, Google primarily evaluated pages as whole units. A page’s overall topic, authority, and quality determined its ranking for a query. With passage ranking, Google can identify and rank individual passages within a page independently of the page’s overall focus.

This means a long-form guide on digital marketing might rank for a specific question about email marketing open rates — not because the page targets that keyword, but because one passage within the page provides an excellent answer. Google’s NLP models identify that passage, evaluate its relevance and quality, and surface it in results.

Content Structure for Passage Ranking

Passage ranking rewards well-structured content with clearly defined sections. Each section should be a self-contained, comprehensive treatment of its subtopic. Use clear headings (H2, H3) that signal the topic of each section. Write section introductions that provide context and section bodies that deliver complete information.

Think of each major section as a potential standalone answer. If someone were to read only that section, would they get a complete, useful response to the subtopic? If yes, that section is a strong passage ranking candidate.

Passage Ranking and Long-Form Content

Passage ranking has reinvigorated the value of comprehensive long-form content. A 3,000-word guide with 8 well-structured sections effectively creates 8 passage ranking opportunities, each capable of ranking for different related queries. This multiplies the search visibility of a single page far beyond what a short, focused article could achieve.

However, passage ranking does not reward length for its own sake. It rewards depth per section. A 3,000-word article with 8 genuinely useful sections outperforms a 5,000-word article with 15 thin sections. Quality per passage, not total volume, is the signal.
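One way to audit depth per section is to split a draft at its headings and count the words under each. The sketch below assumes the draft is written in Markdown with `##` and `###` headings. The 150-word threshold is an arbitrary editorial choice for illustration, not a figure published by Google.

```python
import re

# Matches Markdown H2/H3 headings such as "## Title" or "### Subtitle".
HEADING = re.compile(r"^(#{2,3})\s+(.*)$")


def split_sections(markdown_text):
    """Return a list of (heading, body) pairs, one per H2/H3 section."""
    sections = []
    heading, lines = None, []
    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            if heading is not None:
                sections.append((heading, " ".join(lines).strip()))
            heading, lines = match.group(2).strip(), []
        elif heading is not None:
            lines.append(line.strip())
    if heading is not None:
        sections.append((heading, " ".join(lines).strip()))
    return sections


def thin_sections(markdown_text, min_words=150):
    """Return (heading, word_count) for sections shorter than min_words."""
    report = []
    for heading, body in split_sections(markdown_text):
        count = len(body.split())
        if count < min_words:
            report.append((heading, count))
    return report
```

Word count is only a proxy. A flagged section may be perfectly complete, so treat the report as a prompt to re-read the section rather than as an instruction to pad it.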

NLP-Informed Content Strategy

Understanding Google’s NLP systems should directly inform how you plan, write and structure content. Here is the practical strategy.

Write for Humans, Structured for Machines

This is the fundamental principle of NLP-informed content. Google’s NLP models are trained on human language. They are designed to identify content that is genuinely useful, well-written, and authoritative. The best way to optimise for NLP is to write excellent content for human readers, then ensure it is structurally accessible for machine processing.

Structural accessibility means: clear heading hierarchies, logical section flow, explicit topic sentences at the beginning of sections and paragraphs, concise and precise language, and proper HTML semantics.

Prioritise Clarity Over Complexity

NLP models process clear, well-structured text more effectively than convoluted, jargon-heavy prose. This does not mean dumbing down your content. It means expressing complex ideas clearly. The best technical writing is simultaneously deep and accessible. Aim for content that an informed professional can read efficiently and extract clear value from each section.

For Singapore businesses writing in English, be aware that Google’s NLP models handle Singapore English well but may process heavily colloquial text less accurately. Use standard British English for formal content, reserving Singlish or colloquial terms for contexts where they add genuine value.

Address Queries Directly

NLP-driven search rewards direct answers. When your content addresses a question, provide a clear, concise answer early in the section before expanding with detail and context. This “answer first, elaborate second” pattern aligns with how BERT evaluates passage relevance and how featured snippets are selected.

A section addressing “how does BERT affect SEO” should start with a clear statement of the impact before diving into technical details. This pattern serves both human readers (who want immediate clarity) and NLP evaluation (which identifies direct answers as high-relevance signals).
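The “answer first” pattern can be spot-checked with a crude lexical heuristic: does the opening sentence of a section reuse the main terms of the question it is meant to answer? The sketch below is an editorial aid built on simple word overlap. The stopword list and the 0.5 threshold are assumptions made for this example, and it says nothing about how BERT itself scores passages.

```python
import re

# Small, hand-picked stopword list (an assumption for this example).
STOPWORDS = {
    "a", "an", "the", "is", "are", "does", "do", "how", "what", "why",
    "of", "to", "in", "for", "and", "on",
}


def content_words(text):
    """Lowercased alphanumeric tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def first_sentence(section_text):
    """Text up to the first sentence-ending punctuation mark (naive split)."""
    return re.split(r"(?<=[.!?])\s+", section_text.strip(), maxsplit=1)[0]


def answers_first(question, section_text, min_overlap=0.5):
    """True if the first sentence reuses at least min_overlap of the question's content words."""
    question_terms = content_words(question)
    if not question_terms:
        return False
    shared = question_terms & content_words(first_sentence(section_text))
    return len(shared) / len(question_terms) >= min_overlap
```

A section that opens with “BERT affects SEO by...” passes for the question “How does BERT affect SEO?”, while one that opens with general scene-setting does not. The check matches exact word forms only, so “affect” and “affects” count as different words.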

Use Entities Over Pronouns Where Appropriate

While NLP models can resolve pronoun references, explicit entity mentions provide stronger signals. Instead of writing “it is important for businesses in the region,” write “entity SEO is important for businesses in Singapore.” This clarity helps NLP models identify exactly which entities your content discusses and how they relate to each other.

This does not mean eliminating pronouns entirely — that would produce unnatural, repetitive text. It means using explicit entity references at the start of sections, in key definitional statements, and in passages you want to be strong featured snippet candidates.

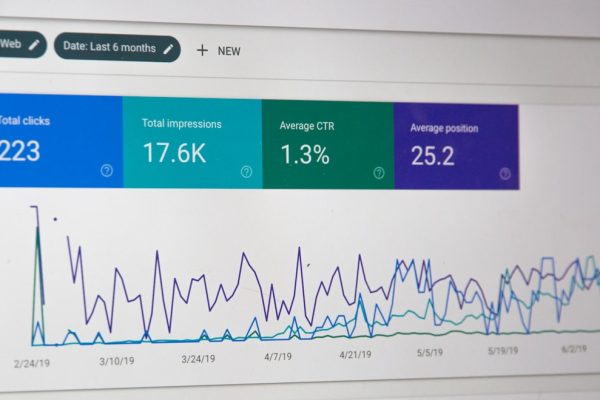

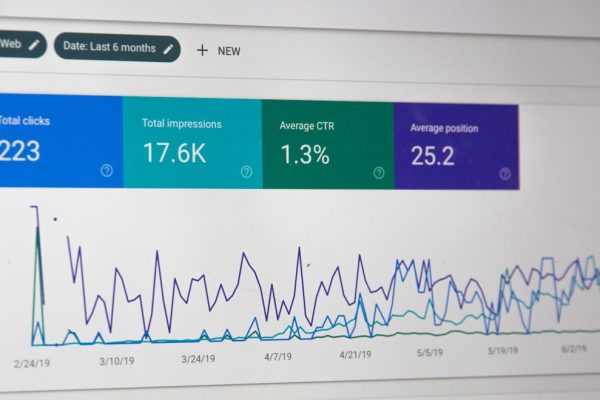

Integrate NLP Analysis Into Your Workflow

Google’s Cloud Natural Language API is publicly available and can analyse your content for entity recognition, sentiment, syntax, and content classification. Running your draft content through entity analysis reveals which entities the API identifies in your text and their salience scores. The public API is not guaranteed to mirror the models used inside Google Search, so treat the output as directional. If key entities you intend to cover are missing or have low salience, revise to strengthen their presence.

This analytical step bridges the gap between what you think your content communicates and what NLP models actually detect. It is particularly valuable for digital marketing content where topical coverage and entity relevance directly impact rankings.
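A minimal sketch of that workflow follows, assuming the `google-cloud-language` Python client is installed and Google Cloud credentials are configured. The `weak_entities` helper and its 0.05 salience threshold are hypothetical conveniences added for this example. They are not part of the API.

```python
def analyze_entities(text):
    """Call the Cloud Natural Language API and return {entity name: salience}.

    Requires the google-cloud-language package and configured credentials.
    """
    # Imported lazily so the helper below can be used without the package.
    from google.cloud import language_v1

    client = language_v1.LanguageServiceClient()
    document = language_v1.Document(
        content=text, type_=language_v1.Document.Type.PLAIN_TEXT
    )
    response = client.analyze_entities(request={"document": document})

    saliences = {}
    for entity in response.entities:
        name = entity.name.lower()
        # Keep the highest salience if the same name appears more than once.
        saliences[name] = max(saliences.get(name, 0.0), entity.salience)
    return saliences


def weak_entities(saliences, targets, min_salience=0.05):
    """Return target entities that are missing or below the salience threshold."""
    return [t for t in targets if saliences.get(t.lower(), 0.0) < min_salience]
```

In practice you would pass your draft text to `analyze_entities`, then call `weak_entities` with the list of entities you intended to cover. Anything it returns is a candidate for an explicit mention or a clearer definitional sentence.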

Technical NLP Optimisation Techniques

Beyond content quality, several technical factors influence how effectively NLP models process your pages.

HTML Semantics and Accessibility

Google’s NLP models process the text content of your pages, and HTML semantics influence how that text is interpreted. Proper use of heading tags (H1 for the page title, H2 for main sections, H3 for subsections) creates a machine-readable content hierarchy. Paragraph tags separate discrete ideas. List tags indicate enumerated or grouped items.

Avoid anti-patterns like using div tags for all content structure, placing text in images (which NLP models cannot process), hiding content behind JavaScript interactions that prevent crawling, or using heading tags for visual styling rather than semantic structure.
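The heading hierarchy of a page can be checked with a few lines of standard-library Python. The sketch below extracts the heading outline, then flags pages without exactly one H1 and headings that skip a level (for example an H4 directly after an H2). These two rules are a common editorial convention, assumed here for illustration. They are not a documented Google requirement.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collects (level, text) for every h1-h6 element in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._buffer).strip()))
            self._level = None


def outline_problems(html):
    """Return a list of human-readable problems with the heading hierarchy."""
    parser = HeadingOutline()
    parser.feed(html)
    problems = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        if level > previous + 1:
            problems.append(
                f"h{level} '{text}' skips a level (previous heading level: {previous or 'none'})"
            )
        previous = level
    return problems
```

An empty list means the outline is consistent under these two rules. It does not tell you whether headings are being used for visual styling, which still needs a human eye.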

Content Rendering and JavaScript

Google renders JavaScript before processing content, but rendering adds latency and complexity. Content that requires JavaScript execution to become visible is processed later and potentially less completely than server-rendered HTML. For content-heavy pages where NLP processing is critical, ensure that the primary text content is available in the initial HTML response.

This is a key consideration for web design decisions. JavaScript frameworks that render content client-side can create NLP processing barriers. Server-side rendering or static generation ensures Google’s NLP models can access your content immediately.
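A quick way to test whether primary content is present in the initial HTML response is to fetch the page without executing JavaScript and look for a few key phrases in the visible text. The sketch below covers the checking step only. It assumes `raw_html` is the unrendered response body (for example from a plain HTTP request), and the key phrases are whatever you choose to supply.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, ignoring script, style, noscript and template content."""

    SKIP = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def phrases_missing_from_html(raw_html, key_phrases):
    """Return the key phrases that do not appear in the page's visible text."""
    parser = VisibleText()
    parser.feed(raw_html)
    # Collapse whitespace and lowercase for a forgiving comparison.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]
```

If a phrase from your main body copy is reported missing, the content probably depends on client-side rendering and is a candidate for server-side rendering or static generation.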

Structured Data as NLP Supplement

While Google’s NLP models analyse your text content, structured data provides explicit entity declarations that supplement NLP analysis. Schema.org markup that identifies the about, author, publisher, and mentions entities on a page gives Google confirmed entity information that validates or enhances what NLP models extract from the text.

Think of structured data as providing NLP models with a “cheat sheet” — explicit declarations that reduce ambiguity and increase confidence in entity identification.
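As a sketch of such a “cheat sheet”, the function below builds a JSON-LD block using the Schema.org `about`, `author`, `publisher`, and `mentions` properties. The function name and the sample values in the test data are placeholders. A production page would normally add further properties such as URLs and dates.

```python
import json


def article_json_ld(headline, author, publisher, about, mentions):
    """Return a <script> element containing Schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "publisher": {"@type": "Organization", "name": publisher},
        # Entities the page is primarily about.
        "about": [{"@type": "Thing", "name": name} for name in about],
        # Entities the page refers to in passing.
        "mentions": [{"@type": "Thing", "name": name} for name in mentions],
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"
```

Using `Thing` keeps the example generic. Where a more specific type applies (for instance `Organization` or `SoftwareApplication`), declaring it gives a clearer signal.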

Language Declaration and Hreflang

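Declaring the page language (the lang attribute on the html element) is good practice for browsers, screen readers, and other tools, but Google’s documentation states that it determines a page’s language from the visible content rather than from the lang attribute. Keep each page in a single primary language so that detection is unambiguous. For multilingual Singapore sites, hreflang annotations tell Google which language versions of a page exist, so that it can serve the correct version to users based on their language and region.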
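For a site with several language versions, the hreflang annotations can be generated from a simple mapping. The sketch below assumes four Singapore-targeted versions on a placeholder `example.com` domain. The URLs and the choice of English as the `x-default` fallback are illustrative assumptions.

```python
# Hypothetical language versions of one page (language-region code -> absolute URL).
VERSIONS = {
    "en-sg": "https://example.com/en/nlp-seo/",
    "zh-sg": "https://example.com/zh/nlp-seo/",
    "ms-sg": "https://example.com/ms/nlp-seo/",
    "ta-sg": "https://example.com/ta/nlp-seo/",
}


def hreflang_links(versions, default_lang):
    """Return <link> tags for every version plus an x-default fallback."""
    lines = [
        f'<link rel="alternate" hreflang="{code}" href="{url}" />'
        for code, url in sorted(versions.items())
    ]
    lines.append(
        f'<link rel="alternate" hreflang="x-default" href="{versions[default_lang]}" />'
    )
    return "\n".join(lines)
```

The same set of tags, including the self-referencing entry, should appear in the head of every language version, so that each page confirms the others as its alternates.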

Content Freshness Signals

AI-driven search features such as AI Overviews appear to favour current information, particularly for topics that change quickly. Regularly updated content with current data, recent examples, and up-to-date information is more likely to be surfaced in these features. Include clear date signals (publication date, last updated date) in both visible content and structured data.

The Future of NLP in Search

Google’s NLP capabilities will continue to advance rapidly. Understanding the direction of travel helps you future-proof your content strategy.

AI Overviews and Generative Search

Google’s AI Overviews represent the next frontier of NLP in search. Rather than ranking ten blue links, Google’s AI generates a synthesised response drawing from multiple sources. Content that is cited in AI Overviews must be high quality, factually accurate, and topically authoritative — because AI models evaluate these qualities using NLP.

For SEO, this means the stakes for content quality are higher than ever. Content that is merely optimised for keywords will not be cited in AI Overviews. Content that demonstrates genuine expertise and provides unique value will be.

Conversational and Multi-Turn Search

Search is becoming increasingly conversational. Users ask follow-up questions, refine queries, and explore topics across multiple interactions. NLP models that maintain context across conversation turns need content that provides comprehensive, interconnected information.

Content architectures that support conversational search — topic hubs with clear internal linking, comprehensive FAQ sections, and content that anticipates follow-up questions — are well-positioned for this shift.

Personalised NLP Understanding

Google’s NLP models increasingly incorporate user context: search history, location, language preference, and interaction patterns. This means different users may see different results for the same query based on NLP’s assessment of their individual intent.

For SEO, this reinforces the importance of comprehensive content that addresses multiple facets of a topic. A single page that serves various intent variations is more robust against personalised ranking adjustments than a narrowly focused page that serves only one interpretation of a query.

NLP and SEO will continue to converge. The businesses that thrive will be those that view NLP not as a technical curiosity but as the foundation of how search works — and build their content strategies accordingly. Our Google Ads services can support your visibility while your NLP-optimised organic content strategy matures.

Frequently Asked Questions

What does NLP stand for in SEO?

NLP stands for natural language processing. In the SEO context, it refers to the AI and machine learning technologies Google uses to understand the meaning of both search queries and web content. NLP enables Google to go beyond keyword matching to understand intent, context, entities, relationships, and content quality at a linguistic level.

How does BERT affect my SEO strategy?

BERT affects SEO by enabling Google to understand content at a much deeper linguistic level. It means keyword stuffing and unnatural phrasing are counterproductive. Write naturally, use clear sentence structures, ensure every section provides genuine value, and address queries directly. BERT rewards content that is well-written and genuinely useful, not content that is mechanically optimised for keywords.

Can I optimise specifically for BERT or MUM?

You cannot target BERT or MUM with specific technical tweaks the way you might optimise for a traditional ranking factor. These are language understanding models, not ranking algorithms with adjustable signals. The best optimisation is to write comprehensive, clearly structured, high-quality content that genuinely serves user intent. This naturally aligns with what BERT and MUM are designed to identify and reward.

What is passage ranking and how does it affect content length?

Passage ranking is Google’s ability to evaluate and rank individual passages within a page, even when the page as a whole targets a broader topic. It means a specific section of a long article can rank for a query that the overall page does not directly target. This rewards comprehensive long-form content with well-structured sections, as each section becomes a ranking opportunity in its own right.

Does Google’s NLP understand Singapore English?

Google’s NLP models are trained on vast amounts of text, including Singapore English. They handle standard Singapore English well, including common local terms and conventions. However, heavily colloquial text (dense Singlish, for example) may be processed less accurately. For SEO content, standard British English with Singapore-specific terminology strikes the best balance between NLP processing accuracy and local relevance.

How do NLP models evaluate content quality?

NLP models assess multiple quality dimensions: topical comprehensiveness (does the content cover expected entities and subtopics?), linguistic quality (is the writing clear and coherent?), information accuracy (does the content align with established facts?), and structural clarity (is the content logically organised?). These assessments feed into broader quality scoring that influences rankings.

Should I use Google’s NLP API to analyse my content?

Google’s Cloud Natural Language API is a useful tool for understanding how NLP models process your content. It reveals which entities are detected, their salience, the overall sentiment, and content category classification. While the public API may not perfectly mirror Google Search’s internal models, it provides valuable directional insights for content optimisation.

How does NLP relate to AI Overviews in search?

AI Overviews are powered by large language models that use NLP to synthesise information from multiple sources into a coherent response. Content that appears in AI Overviews must pass NLP quality assessments: it needs to be factually accurate, clearly written, topically authoritative, and genuinely informative. NLP is the technology that enables AI Overviews to identify and cite high-quality sources.

Does multilingual content help with NLP-based ranking?

For businesses serving multilingual markets like Singapore, properly implemented multilingual content can strengthen your topical authority. MUM was trained across 75 languages, so your Chinese-language content about a topic may reinforce your authority alongside your English-language content on the same topic. Proper hreflang implementation helps Google serve each language version to the right audience.

How will NLP developments affect SEO in the next few years?

NLP will continue to raise the quality bar for ranking content. AI-driven search features will cite fewer sources but expect higher quality from those cited. Conversational search will require content that addresses multi-step queries comprehensively. Entity understanding will become more nuanced, rewarding content from recognised authoritative entities. The core strategy remains unchanged: produce genuinely excellent content that serves real information needs. The difference is that NLP increasingly has the capability to distinguish excellent content from merely acceptable content.