Log File Analysis for SEO: Understand How Google Crawls Your Site

What Is Log File Analysis for SEO

Server log files record every request made to your web server — every page load, every resource fetch, every bot visit. For SEO, log file analysis means parsing these records to understand exactly how search engine crawlers interact with your site. Unlike Google Search Console, which provides Google’s curated summary of crawl activity, log files give you the raw, unfiltered truth about what is happening on your server.

Every time Googlebot requests a URL from your site, your server logs the request with details including the timestamp, requested URL, HTTP status code returned, response size, user agent string, and referring URL. This data, when aggregated and analysed, reveals patterns that no other SEO tool can provide.

Log file analysis answers questions that Search Console cannot: Which pages is Google crawling most frequently? Which pages has Google never requested? How quickly does Google discover new content? Are there URLs being crawled that should not exist? Is Googlebot encountering server errors you are not aware of?

For Singapore businesses running complex websites — multi-language e-commerce platforms, content-heavy publishing sites, or large service directories — log file analysis is one of the most underutilised techniques in the SEO toolkit. The data is already being generated by your server; you simply need to extract and interpret it.

Accessing and Preparing Server Log Files

Before analysis can begin, you need access to raw server log files and an understanding of their format.

Where to Find Log Files

Log file locations vary by server and hosting environment:

- Apache: Typically at /var/log/apache2/access.log or /var/log/httpd/access_log

- Nginx: Default location is /var/log/nginx/access.log

- IIS: Located under C:\inetpub\logs\LogFiles\ by default, with one subdirectory per site (for example W3SVC1)

- Shared hosting: Usually accessible via cPanel under Raw Access Logs or Metrics > Raw Access

- Cloud platforms (AWS, GCP): Access logs may require enabling and configuring separately (CloudFront logs, Cloud CDN logs, Application Load Balancer logs)

- CDN-level logs: Cloudflare, Fastly, and Akamai provide their own access logs, which may differ from origin server logs

Log File Formats

The two most common formats are Common Log Format (CLF) and Combined Log Format. The Combined format is preferred for SEO analysis because it includes the referrer and user agent fields:

203.0.113.50 - - [15/Mar/2026:10:15:30 +0800] "GET /services/seo/ HTTP/1.1" 200 45230 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

This single line tells you: the IP address, timestamp (with Singapore timezone +0800), requested URL and method, HTTP status code (200), response size in bytes, referring URL (none in this case), and user agent (Googlebot).
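A minimal sketch of parsing that sample line in Python, assuming the standard Combined Log Format with no custom fields. The names `LINE_RE` and `parse_line` are illustrative and not part of any library.

```python
import re
from datetime import datetime

# Combined Log Format:
# ip ident user [time] "method url protocol" status size "referrer" "user-agent"
LINE_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) (?P<protocol>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)

def parse_line(line):
    """Return a dict of fields, or None if the line does not match the format."""
    match = LINE_RE.match(line)
    if match is None:
        return None
    record = match.groupdict()
    # %z keeps the logged UTC offset, so the timestamp stays timezone-aware.
    record["time"] = datetime.strptime(record["time"], "%d/%b/%Y:%H:%M:%S %z")
    record["status"] = int(record["status"])
    record["size"] = 0 if record["size"] == "-" else int(record["size"])
    return record

sample = (
    '203.0.113.50 - - [15/Mar/2026:10:15:30 +0800] '
    '"GET /services/seo/ HTTP/1.1" 200 45230 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
)
record = parse_line(sample)
print(record["url"], record["status"], record["time"].isoformat())
# prints: /services/seo/ 200 2026-03-15T10:15:30+08:00
```

Lines that do not match (truncated writes, custom formats) return None, so they can be counted and inspected rather than silently misparsed.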

Preparing Log Data for Analysis

Raw log files can be enormous — a busy site may generate gigabytes of log data per day. Preparation steps include:

- Filtering for bot traffic only: Remove human visitor requests to focus on search engine crawler behaviour. Filter by known bot user agent strings.

- Decompressing archived logs: Servers typically rotate and compress old log files. Decompress the time period you need for analysis.

- Standardising timestamps: If your server logs use UTC and you need Singapore time (SGT, UTC+8), convert timestamps for meaningful time-of-day analysis.

- Removing static resource requests: Filter out requests for images, CSS, JavaScript, and font files unless you are specifically analysing resource crawling.
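The filtering and timestamp steps above could be sketched as follows. This assumes each log line has already been parsed into a dict with `url`, `agent`, and a timezone-aware `time`; the function name `prepare` and both token lists are illustrative and should be extended for your own site.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import urlsplit

SGT = timezone(timedelta(hours=8))  # Singapore Time, UTC+8

# Illustrative lists only; adjust for the bots and file types you care about.
BOT_TOKENS = ("googlebot", "bingbot", "yandexbot", "baiduspider")
STATIC_EXTENSIONS = (".css", ".js", ".png", ".jpg", ".jpeg", ".gif",
                     ".svg", ".webp", ".ico", ".woff", ".woff2", ".ttf")

def prepare(records):
    """Keep bot requests for non-static URLs and express timestamps in SGT."""
    kept = []
    for record in records:
        agent = record["agent"].lower()
        if not any(token in agent for token in BOT_TOKENS):
            continue  # human or unrecognised client
        path = urlsplit(record["url"]).path.lower()  # ignores the query string
        if path.endswith(STATIC_EXTENSIONS):
            continue  # image, stylesheet, script, or font request
        kept.append({**record, "time": record["time"].astimezone(SGT)})
    return kept

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
records = [
    {"url": "/services/seo/", "agent": GOOGLEBOT_UA,
     "time": datetime(2026, 3, 15, 2, 15, 30, tzinfo=timezone.utc)},
    {"url": "/assets/site.css?v=3", "agent": GOOGLEBOT_UA,
     "time": datetime(2026, 3, 15, 2, 15, 31, tzinfo=timezone.utc)},
    {"url": "/services/seo/", "agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
     "time": datetime(2026, 3, 15, 2, 16, 0, tzinfo=timezone.utc)},
]
clean = prepare(records)
```

User agent matching at this stage only narrows the data; it does not prove a request came from a genuine crawler.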

Data Retention Considerations

Ensure your server retains log files for at least 90 days — ideally longer. Many hosting providers purge logs after 30 days, which limits your ability to identify long-term patterns. Configure log rotation to archive rather than delete old files. For comprehensive analysis, three to six months of data provides the most useful baseline.

Identifying Search Engine Bots in Log Data

Accurately identifying genuine search engine bots in log files is critical. Not every request claiming to be Googlebot is actually from Google.

User Agent Strings for Major Bots

The primary bots to identify include:

- Googlebot (desktop): Contains “Googlebot/2.1” in the user agent string, without the “Mobile” token

- Googlebot (mobile): Contains “Googlebot” along with “Mobile” in the user agent

- Googlebot-Image: Specifically crawls images

- Google-InspectionTool: Used by Search Console’s URL Inspection tool

- Bingbot: Contains “bingbot/2.0” in the user agent

- Yandex: Contains “YandexBot” in the user agent

- Baidu: Contains “Baiduspider” — relevant for Singapore businesses targeting China

Verifying Bot Authenticity

Fake bot traffic is common. Scrapers and malicious crawlers often use Googlebot’s user agent string to bypass access restrictions. To verify genuine Googlebot traffic, perform a reverse DNS lookup on the requesting IP address. Genuine Googlebot IPs resolve to hostnames ending in .googlebot.com or .google.com. A forward DNS lookup on that hostname should resolve back to the original IP.

# Reverse DNS lookup

host 66.249.66.1

# Should return: 1.66.249.66.in-addr.arpa domain name pointer crawl-66-249-66-1.googlebot.com

# Forward DNS verification

host crawl-66-249-66-1.googlebot.com

# Should return the original IP: 66.249.66.1

Google also publishes a JSON file listing all Googlebot IP ranges, which you can use for bulk verification without individual DNS lookups.
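The same reverse-then-forward check can be scripted. The sketch below uses Python's standard `socket` module; the function name and the injectable `reverse_lookup` and `forward_lookup` parameters are assumptions added so the logic can be exercised without network access. The forward lookup used by default covers IPv4 only.

```python
import socket

GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")

def is_genuine_googlebot(ip, reverse_lookup=None, forward_lookup=None):
    """Reverse-resolve the IP, check the hostname suffix, then forward-confirm."""
    # Default to real DNS; callers may pass stubs (hypothetical hooks for testing).
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])
    try:
        hostname = reverse_lookup(ip)
    except OSError:
        return False  # no PTR record or lookup failure
    if not hostname.rstrip(".").endswith(GOOGLE_SUFFIXES):
        return False
    try:
        # The hostname must resolve back to the IP that made the request.
        return ip in forward_lookup(hostname)
    except OSError:
        return False

# Offline demonstration with stubbed DNS answers (made-up data).
stub_reverse = {
    "66.249.66.1": "crawl-66-249-66-1.googlebot.com",
    "198.51.100.7": "crawl-66-249-66-1.googlebot.com",  # spoofed PTR record
    "203.0.113.9": "scraper.example.net",
}.__getitem__
stub_forward = lambda host: ["66.249.66.1"]

print(is_genuine_googlebot("66.249.66.1", stub_reverse, stub_forward))   # True
print(is_genuine_googlebot("198.51.100.7", stub_reverse, stub_forward))  # False
```

DNS lookups are slow at volume, so in practice you would cache the result per IP address rather than resolve every log line.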

Separating Bot Types

For analysis, separate Googlebot desktop from Googlebot mobile — Google primarily uses mobile-first indexing, so mobile Googlebot crawl patterns are more relevant for indexing analysis. Also separate Googlebot’s HTML crawler from its image, video, and AdsBot variants, as each has different crawl patterns and budget implications.
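One way to split verified Google traffic by crawler type is simple token matching on the user agent. This is a sketch: the category labels are my own, and the token list covers only the variants named in this article.

```python
def classify_google_agent(agent):
    """Map a user agent string to a coarse Google crawler category."""
    ua = agent.lower()
    # Check the specialised crawlers first; some also carry a "Mobile" token.
    if "adsbot-google" in ua:
        return "adsbot"
    if "googlebot-image" in ua:
        return "image"
    if "googlebot-video" in ua:
        return "video"
    if "google-inspectiontool" in ua:
        return "inspection-tool"
    if "googlebot" in ua:
        return "search-mobile" if "mobile" in ua else "search-desktop"
    return "other"

desktop = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
mobile = ("Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) "
          "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Mobile "
          "Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)")

print(classify_google_agent(desktop))  # search-desktop
print(classify_google_agent(mobile))   # search-mobile
```

The order of the checks matters: testing for the plain "googlebot" token first would file Googlebot-Image requests under the HTML crawler.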

Key Metrics to Extract From Log Files

With clean, verified bot data, extract these core metrics for SEO analysis.

Crawl Frequency by URL

Count the number of Googlebot requests per URL over your analysis period. This reveals which pages Google prioritises. Compare this against your own priority assessment: are your most important pages (revenue-generating product pages, key service pages, fresh content) receiving proportional crawl attention?

A common finding is that Google spends disproportionate crawl budget on low-value pages — old blog posts, parameter-laden URLs, paginated archives — whilst high-priority pages receive minimal attention.

Crawl Frequency Over Time

Track total daily crawl volume over time to identify trends and anomalies. A sustained decline in crawl volume may indicate server performance issues, a manual action, or decreased site authority. Sudden spikes often correlate with sitemap submissions, major content additions, or structural changes.

HTTP Status Code Distribution

Aggregate the HTTP status codes Googlebot receives. A healthy profile is predominantly 200 responses with minimal 301s, 404s, and zero 500s. High rates of non-200 responses waste crawl budget and signal site quality issues to Google:

- 301/302 redirects: Each redirect consumes a crawl request. High redirect rates indicate stale internal links or unresolved URL migrations.

- 404 errors: Googlebot requesting non-existent pages means it is following outdated links — internal, external, or from sitemaps.

- 500 errors: Server errors during crawling directly reduce your crawl rate limit. Even intermittent 500s can cause Google to throttle crawling.

- 304 Not Modified: Efficient — indicates Google is checking for updates and your server correctly reports no changes, saving bandwidth.

Response Time Distribution

If your logs include response time (not all formats do — you may need to configure custom logging), analyse the distribution. Identify pages with consistently slow response times. These pages are bottlenecks that reduce your overall crawl rate limit. Slow pages often share common causes: unoptimised database queries, missing caches, or heavy server-side processing.
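As an illustration, suppose your log format has been extended with a response time field (for example Nginx's `$request_time` or Apache's `%D`) and you have extracted `(url, seconds)` pairs from it. The function name, threshold, and minimum sample size below are arbitrary assumptions, not recommended values.

```python
from collections import defaultdict
from statistics import median

def slow_urls(samples, threshold_seconds=1.0, min_requests=3):
    """Return (url, median_seconds) for consistently slow URLs, slowest first."""
    times_by_url = defaultdict(list)
    for url, seconds in samples:
        times_by_url[url].append(seconds)
    slow = []
    for url, times in times_by_url.items():
        if len(times) < min_requests:
            continue  # too few requests to call the URL "consistently" slow
        typical = median(times)  # the median resists one-off outliers
        if typical > threshold_seconds:
            slow.append((url, typical))
    return sorted(slow, key=lambda item: item[1], reverse=True)

samples = [
    ("/search", 2.4), ("/search", 2.1), ("/search", 2.9),
    ("/about", 0.2), ("/about", 0.3), ("/about", 0.25),
    ("/rare", 5.0),  # a single slow request, ignored by min_requests
]
print(slow_urls(samples))  # [('/search', 2.4)]
```

Using the median rather than the mean keeps a single timeout from flagging a page that is normally fast.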

Crawl Depth Analysis

By analysing which URL paths Googlebot accesses, you can determine how deep into your site structure Google crawls. If important content lives at depth four or five (four or five clicks from the homepage) but Googlebot rarely reaches beyond depth three, those deep pages are effectively invisible. This informs site architecture decisions and internal linking strategies.
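Logs do not record click depth directly, so a rough proxy is the number of path segments in each crawled URL. The sketch below makes that assumption explicit; true click depth needs a site crawl, and the two can differ when URLs are flat or deeply nested for other reasons.

```python
from collections import Counter
from urllib.parse import urlsplit

def path_depth(url):
    """Number of non-empty path segments: '/' is 0, '/blog/post/' is 2."""
    return len([segment for segment in urlsplit(url).path.split("/") if segment])

def requests_by_depth(urls):
    """Count crawler requests at each path depth, shallowest first."""
    return dict(sorted(Counter(path_depth(url) for url in urls).items()))

crawled = ["/", "/blog/", "/blog/seo-tips/", "/blog/", "/shop/shoes/running/nike-air/"]
print(requests_by_depth(crawled))  # {0: 1, 1: 2, 2: 1, 4: 1}
```

A sharp fall-off in requests beyond a given depth is the signal to look for; the absolute numbers matter less than the shape of the distribution.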

Interpreting Crawl Behaviour Patterns

Raw metrics become valuable when you interpret them as patterns that reveal Google’s perception of your site.

Discovery vs Refresh Crawling

Classify Googlebot’s requests into two categories: visits to previously unknown URLs (discovery) and revisits to known URLs (refresh). If refresh crawling dominates, Google is spending most of its budget re-checking existing pages rather than finding new content. This is common on sites with strong internal linking to existing pages but poor mechanisms for surfacing new content.
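A simple way to approximate this split is to treat the first request for a URL as discovery and every later request as refresh. Logs cannot show what Google already knew before your analysis window, so the `known_urls` argument below is an assumption: seed it with URLs from earlier logs if you have them.

```python
from datetime import datetime

def classify_requests(requests, known_urls=()):
    """Count discovery vs refresh requests.

    requests: iterable of (timestamp, url) pairs for one crawler.
    known_urls: URLs assumed to be known before the analysis period.
    """
    seen = set(known_urls)
    counts = {"discovery": 0, "refresh": 0}
    for _, url in sorted(requests, key=lambda request: request[0]):
        if url in seen:
            counts["refresh"] += 1
        else:
            counts["discovery"] += 1
            seen.add(url)
    return counts

requests = [
    (datetime(2026, 3, 15, 10, 0), "/services/"),
    (datetime(2026, 3, 15, 10, 5), "/blog/new-post/"),
    (datetime(2026, 3, 16, 9, 0), "/services/"),
    (datetime(2026, 3, 16, 9, 30), "/blog/another-post/"),
    (datetime(2026, 3, 17, 8, 0), "/blog/new-post/"),
]
result = classify_requests(requests, known_urls={"/services/"})
print(result)  # {'discovery': 2, 'refresh': 3}
```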

Crawl Timing Patterns

Googlebot crawls at different intensities throughout the day. Identifying peak crawl times helps with server capacity planning. Some sites see higher crawl activity during low-traffic hours (when server load is lighter and responses are faster). Others see crawl spikes shortly after content publication, indicating Google has learned your publishing schedule.

For Singapore-based sites, note whether Googlebot crawls primarily during particular windows, such as US business hours, or distributes crawling evenly across the day. Treat any time-zone pattern as an observation from your own logs rather than a documented rule; it can still inform when you publish new content for fastest indexing.

Crawl Traps and Infinite Loops

Log file analysis is the most reliable way to identify crawl traps — URL patterns that generate infinite crawlable URLs. Common traps include calendar widgets that generate URLs for every date combination, sorting and filtering parameters that combine endlessly, and relative URLs that create infinitely deep paths (e.g., /parent/parent/parent/parent/page).

In log data, crawl traps appear as a large number of requests to URLs sharing a common pattern, often with incrementally deeper paths or longer query strings. Identifying and blocking these traps can recover significant crawl budget.
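Both signatures described above can be checked mechanically. The sketch below is one heuristic under stated assumptions: it flags paths where a segment repeats consecutively, and groups URLs by path plus sorted parameter names to find patterns with many distinct variants. The function names and thresholds are illustrative.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlsplit

def has_repeated_segment(url, min_repeats=3):
    """True if one path segment repeats consecutively, e.g. /a/a/a/page."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    run = 1
    for previous, current in zip(segments, segments[1:]):
        run = run + 1 if current == previous else 1
        if run >= min_repeats:
            return True
    return False

def url_pattern(url):
    """Reduce a URL to its path plus sorted parameter names (values dropped)."""
    parts = urlsplit(url)
    names = sorted({name for name, _ in parse_qsl(parts.query, keep_blank_values=True)})
    return parts.path + ("?" + "&".join(names) if names else "")

def suspicious_patterns(urls, min_distinct=100):
    """Patterns that expand into at least `min_distinct` different URLs."""
    variants = defaultdict(set)
    for url in urls:
        variants[url_pattern(url)].add(url)
    return {p: len(v) for p, v in variants.items() if len(v) >= min_distinct}

calendar_urls = [f"/calendar?date=2026-01-{day:02d}" for day in range(1, 32)]
print(has_repeated_segment("/parent/parent/parent/parent/page"))  # True
print(suspicious_patterns(calendar_urls + ["/about/"], min_distinct=20))
# {'/calendar?date': 31}
```

Patterns flagged this way are candidates for review, not automatic blocks: a product listing with many legitimate filter combinations will look similar.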

Section-Level Crawl Distribution

Group URLs by site section (blog, products, categories, service pages) and compare crawl frequency across sections. This reveals whether Google’s crawl priority aligns with your business priority. If your product pages generate 80% of revenue but receive only 20% of crawl attention, restructuring internal links and navigation can rebalance the distribution. A coherent digital marketing strategy ensures crawl resources are directed towards the pages that drive business results.
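To make the comparison concrete, the sketch below groups crawled URLs by their first path segment and flags sections whose crawl share trails their business share. The business shares are figures you supply yourself (for example revenue by section); the sample numbers and the 20-point tolerance are made up for illustration.

```python
from collections import Counter
from urllib.parse import urlsplit

def section_of(url):
    """Use the first path segment as the section name."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return segments[0] if segments else "(root)"

def crawl_share(urls):
    """Fraction of crawler requests that each section receives."""
    counts = Counter(section_of(url) for url in urls)
    total = sum(counts.values())
    return {section: n / total for section, n in counts.items()} if total else {}

def under_crawled(crawl, business, tolerance=0.2):
    """Sections whose crawl share trails business share by more than `tolerance`."""
    return sorted(section for section, share in business.items()
                  if share - crawl.get(section, 0.0) > tolerance)

urls = ["/blog/a/"] * 6 + ["/products/x/"] * 2 + ["/tags/t/"] * 2
business_share = {"products": 0.8, "blog": 0.2}  # hypothetical revenue split

shares = crawl_share(urls)
print(shares["products"])                     # 0.2
print(under_crawled(shares, business_share))  # ['products']
```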

Finding and Fixing Wasted Crawl Budget

The most immediate value of log file analysis is identifying wasted crawl budget and implementing fixes.

Pages Crawled but Not in Sitemap

Cross-reference the URLs Googlebot crawls against your XML sitemap. URLs that Googlebot requests but that are not in your sitemap may be orphan pages, deprecated pages, or URLs you did not intend to be crawlable. Each of these wastes crawl budget and may indicate structural problems.

Sitemap Pages Not Crawled

Conversely, identify sitemap URLs that Googlebot has not requested during your analysis period. These are pages you consider important enough to include in your sitemap but that Google is not prioritising. Causes include poor internal linking (Google does not discover them organically), low perceived authority, or being overshadowed by higher-priority pages consuming the available budget.
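Both cross-references reduce to set differences once sitemap URLs and logged URLs are in the same form. The sketch below assumes a standard sitemaps.org XML file and compares on URL path only, since logs usually record paths while sitemaps list absolute URLs. The sample sitemap and function names are illustrative.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_paths(xml_text):
    """Extract the path of every <loc> entry in a sitemap document."""
    root = ET.fromstring(xml_text)
    return {urlsplit(loc.text.strip()).path
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}

def compare_with_sitemap(crawled_paths, sitemap):
    """Return the two mismatches between crawled URLs and sitemap URLs."""
    crawled = set(crawled_paths)
    return {
        "crawled_not_in_sitemap": sorted(crawled - sitemap),
        "in_sitemap_not_crawled": sorted(sitemap - crawled),
    }

sample_sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/services/seo/</loc></url>
  <url><loc>https://www.example.com/contact/</loc></url>
</urlset>"""

crawled = ["/services/seo/", "/old-promo/", "/services/seo/"]
report = compare_with_sitemap(crawled, sitemap_paths(sample_sitemap))
print(report)
# {'crawled_not_in_sitemap': ['/old-promo/'], 'in_sitemap_not_crawled': ['/contact/']}
```

Comparing on path alone ignores query strings and host differences; if your site serves several hostnames, compare on host plus path instead.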

High-Volume Low-Value Crawls

Sort URLs by crawl frequency and examine the most-crawled pages. Are they your most important pages? Often, the most-crawled URLs are pagination pages, tag archives, or parameter variations — pages with minimal unique value. Compare the top 100 most-crawled URLs against your top 100 most important pages (by revenue, traffic, or strategic value). Misalignment indicates optimisation opportunities.

Implementing Fixes

Based on log file findings, common fixes include:

- Robots.txt updates: Block URL patterns that generate high crawl volume with low value

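- Noindex directives: Apply to pages that serve users but should not be indexed. Google must still crawl a page to see the directive, so any crawl saving is gradual, and the page must not also be blocked in robots.txt or the directive will never be read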

- Internal link restructuring: Add links to under-crawled important pages, reduce links to over-crawled low-value pages

- Server performance improvements: Address slow-responding pages identified in log timing data

- Redirect chain resolution: Replace multi-hop redirects with direct links to final destinations

- 404 cleanup: Fix or redirect URLs that consistently return 404 to Googlebot
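Before deploying a robots.txt change from the list above, it can help to test the proposed rules against URLs taken from your logs. The sketch below uses Python's standard `urllib.robotparser`, which applies plain prefix matching and may not reproduce Google's handling of wildcard patterns, so treat it as a sanity check. The rules and URLs are made-up examples.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text would disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

proposed_rules = """\
User-agent: *
Disallow: /calendar/
Disallow: /search
"""

urls_from_logs = [
    "/calendar/2026/03/",  # crawl trap, should be blocked
    "/search?q=seo",       # internal search results, should be blocked
    "/services/seo/",      # important page, must stay crawlable
]
print(blocked_urls(proposed_rules, urls_from_logs))
# ['/calendar/2026/03/', '/search?q=seo']
```

Running your list of priority URLs through the same check guards against a rule that is broader than intended.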

Tools and Methods for Log File Analysis

The appropriate tool depends on your log volume, technical capability, and analysis requirements.

Command-Line Tools

For quick, ad-hoc analysis, command-line tools are fast and flexible. Using grep, awk, and sort on Unix-based systems, you can extract bot-specific data, count request frequencies, and identify patterns. For example:

# Count Googlebot requests per URL, sorted by frequency

grep "Googlebot" access.log | awk '{print $7}' | sort | uniq -c | sort -rn | head -50

# Count status codes for Googlebot requests

grep "Googlebot" access.log | awk '{print $9}' | sort | uniq -c | sort -rn

# Find 500 errors served to Googlebot

grep "Googlebot" access.log | awk '$9 == 500 {print $7}' | sort | uniq -c | sort -rn

Command-line analysis is ideal for answering specific questions quickly but becomes unwieldy for comprehensive analysis across large datasets.

Spreadsheet Analysis

For moderate log volumes (up to a few hundred thousand rows), importing filtered log data into Google Sheets or Excel allows pivot table analysis, charting, and easier exploration. Pre-filter logs to bot traffic only before importing to keep file sizes manageable.

Dedicated SEO Log Analysis Tools

Specialised tools designed for SEO log analysis include Screaming Frog Log File Analyser, JetOctopus, Oncrawl, and Botify. These tools parse log files automatically, identify bots, generate visualisations, and cross-reference log data with crawl data and Google Search Console information. For large sites, these tools save significant time compared to manual analysis.

ELK Stack and Custom Pipelines

For enterprise-scale analysis, the ELK stack (Elasticsearch, Logstash, Kibana) or similar log aggregation platforms provide real-time analysis, long-term storage, and powerful visualisation. Logstash parses and normalises log data, Elasticsearch indexes it for fast querying, and Kibana provides dashboards and visualisations. This approach requires infrastructure investment but enables continuous monitoring rather than periodic snapshots.

Python and Data Analysis Libraries

For custom analysis, Python with pandas provides the flexibility to answer any question your data can support. Parsing log files into a pandas DataFrame allows grouping, filtering, time-series analysis, and integration with other data sources (Search Console API, Analytics data, sitemap lists).

Turning Log Data Into Actionable SEO Improvements

Log file analysis only delivers value when insights translate into actions. Here are the most impactful optimisation workflows driven by log data.

Orphan Page Discovery and Resolution

Compare log file URLs against a full site crawl from Screaming Frog or Sitebulb. Pages that appear in logs (Googlebot found them somehow) but not in your internal crawl are orphan pages — accessible to Google but not linked from your site structure. Decide whether to integrate them into your site structure with internal links or remove them entirely.

Content Freshness Optimisation

Identify pages with high crawl frequency but no content changes. If Google is re-crawling the same unchanged content repeatedly, implement proper Last-Modified headers and ETag support so Google receives 304 Not Modified responses, saving crawl budget for pages that actually change.
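Most web servers and frameworks handle conditional requests for you, but the decision itself is small. The sketch below shows the logic under simplifying assumptions: header names are matched exactly as written, ETags are split on commas, and `conditional_status` is a hypothetical helper rather than any framework's API.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime

def _strip_weak(tag):
    """Drop the weak-validator prefix so W/"x" and "x" compare equal."""
    tag = tag.strip()
    return tag[2:] if tag.startswith("W/") else tag

def conditional_status(request_headers, etag, last_modified):
    """Return 304 if the client's validators still match, otherwise 200."""
    if_none_match = request_headers.get("If-None-Match")
    if if_none_match is not None:
        # If-None-Match takes precedence over If-Modified-Since.
        candidates = {_strip_weak(tag) for tag in if_none_match.split(",")}
        matched = "*" in candidates or _strip_weak(etag) in candidates
        return 304 if matched else 200
    if_modified_since = request_headers.get("If-Modified-Since")
    if if_modified_since is not None:
        try:
            since = parsedate_to_datetime(if_modified_since)
            # HTTP dates have one-second resolution.
            unchanged = last_modified.replace(microsecond=0) <= since
        except (TypeError, ValueError):
            return 200  # unparseable date: send the full response
        return 304 if unchanged else 200
    return 200

page_etag = '"abc123"'
page_modified = datetime(2026, 3, 1, 8, 0, 0, tzinfo=timezone.utc)

print(conditional_status({"If-None-Match": '"abc123"'}, page_etag, page_modified))  # 304
print(conditional_status({"If-None-Match": '"stale"'}, page_etag, page_modified))   # 200
```

In your logs, success shows up as frequently crawled, unchanged URLs shifting from 200 to 304 responses.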

Indexing Pipeline Monitoring

After publishing new content, track how quickly Googlebot discovers and crawls the new URLs. If new content takes days to receive its first crawl, investigate your internal linking structure, sitemap update frequency, and whether you are submitting URLs via Search Console or, for the job posting and livestream pages it officially supports, the Indexing API. A well-optimised content marketing operation ensures new content reaches Google’s index rapidly.
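Time to first crawl can be measured by joining publication times from your CMS with crawler requests from the logs. The sketch below assumes you can export a `{url: published_at}` mapping; that export and the function name are assumptions, and all timestamps must share one timezone.

```python
from datetime import datetime, timedelta

def time_to_first_crawl(published, crawls):
    """Return {url: delay until first crawl, or None if not yet crawled}.

    published: {url: publication datetime}
    crawls: iterable of (timestamp, url) pairs for one crawler
    """
    first_crawl = {}
    for timestamp, url in crawls:
        if url not in published or timestamp < published[url]:
            continue  # untracked URL, or a request made before publication
        if url not in first_crawl or timestamp < first_crawl[url]:
            first_crawl[url] = timestamp
    return {url: (first_crawl[url] - published_at if url in first_crawl else None)
            for url, published_at in published.items()}

published = {
    "/blog/new-post/": datetime(2026, 3, 15, 9, 0),
    "/blog/unseen/": datetime(2026, 3, 15, 9, 0),
}
crawls = [
    (datetime(2026, 3, 16, 15, 0), "/blog/new-post/"),
    (datetime(2026, 3, 15, 21, 30), "/blog/new-post/"),
    (datetime(2026, 3, 15, 22, 0), "/services/"),
]
delays = time_to_first_crawl(published, crawls)
print(delays["/blog/new-post/"])  # 12:30:00
print(delays["/blog/unseen/"])    # None
```

URLs that return None after several days are the ones to check for missing internal links or sitemap entries.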

Ongoing Monitoring and Alerting

Set up automated monitoring for key crawl health metrics: daily crawl volume, 500 error rate, average response time, and new URL discovery rate. Alert on significant deviations — a 50% drop in daily crawl volume or a spike in 500 errors warrants immediate investigation. Integrate log monitoring into your broader performance marketing dashboard to correlate crawl health with traffic and conversion metrics.
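A threshold check of this kind might look like the sketch below. It compares today's Googlebot request count against the mean of recent days and checks the share of 5xx responses. The 50% drop mirrors the example above; the 2% error threshold, the function name, and the sample figures are illustrative assumptions.

```python
from statistics import mean

def crawl_health_alerts(history, today_requests, today_5xx,
                        max_drop=0.5, max_error_rate=0.02):
    """Return alert messages for a crawl volume drop or a 5xx spike.

    history: daily Googlebot request counts for the baseline period.
    """
    alerts = []
    baseline = mean(history) if history else 0
    if baseline and today_requests <= baseline * (1 - max_drop):
        drop = 1 - today_requests / baseline
        alerts.append(f"crawl volume down {drop:.0%} against baseline of {baseline:.0f}/day")
    if today_requests and today_5xx / today_requests > max_error_rate:
        rate = today_5xx / today_requests
        alerts.append(f"5xx rate at {rate:.1%} of Googlebot requests")
    return alerts

last_week = [1200, 1150, 1300, 1250, 1180, 1220, 1260]
alerts = crawl_health_alerts(last_week, today_requests=480, today_5xx=24)
for message in alerts:
    print(message)
# crawl volume down 61% against baseline of 1223/day
# 5xx rate at 5.0% of Googlebot requests
```

A trailing mean is a crude baseline; sites with strong weekday patterns may prefer to compare against the same weekday in previous weeks.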

Frequently Asked Questions

What server log format is best for SEO analysis?

The Combined Log Format is the minimum requirement as it includes the user agent field needed to identify search engine bots. If your server supports custom log formats, add response time and request size fields for more comprehensive analysis. Avoid formats that omit the user agent or URL query string.

How much log data do I need for meaningful SEO analysis?

A minimum of 30 days provides a baseline, but 90 days is recommended to capture weekly patterns, monthly content cycles, and seasonal variations. For sites with infrequent Googlebot visits, longer periods ensure you have enough data points for statistical significance. Sites with daily crawl volumes under 100 pages should analyse at least six months of data.

Can I use CDN logs instead of origin server logs?

CDN logs capture requests at the edge, which may differ from origin server logs if the CDN serves cached content. For SEO analysis, CDN logs are acceptable and often preferable because they show what Googlebot actually receives, including cached responses. However, ensure your CDN logs include all necessary fields (user agent, status code, full URL with query parameters).

How do I verify that a request is genuinely from Googlebot?

Perform a reverse DNS lookup on the IP address. Genuine Googlebot IPs resolve to hostnames ending in .googlebot.com or .google.com. Then perform a forward DNS lookup on that hostname to verify it resolves back to the original IP. Google also publishes a JSON file of all Googlebot IP ranges for bulk verification.

What does a high rate of 304 responses to Googlebot mean?

A 304 Not Modified response means Googlebot checked a page and your server correctly reported that content has not changed since the last crawl. This is efficient — it saves bandwidth and processing for both your server and Google. A high 304 rate on frequently crawled pages is positive. A high 304 rate on pages you have recently updated suggests caching headers or ETags are not updating correctly.

Why does Googlebot crawl pages I have blocked in robots.txt?

If you see requests for robots.txt-blocked URLs in your logs, check two things: first, whether the requests predate your robots.txt update (Googlebot caches robots.txt and checks periodically); second, whether the requests are from a different Googlebot variant (Googlebot-Image, AdsBot) that may have different robots.txt rules. Also verify the request is genuinely from Google and not a fake bot using the Googlebot user agent.

How often should I perform log file analysis?

For large or complex sites, continuous automated monitoring is ideal. For smaller sites, a quarterly analysis is sufficient to identify trends and issues. Additionally, perform analysis after any major site changes — migrations, redesigns, large content additions, or structural changes — to verify Google’s response.

Can log file analysis help with indexing issues?

Absolutely. Log files reveal whether Google is actually crawling pages that are not indexed. If a page is crawled regularly but not indexed, the cause is more likely content quality, duplication, or an indexing directive (a noindex tag or a canonical pointing elsewhere) than discoverability. If a page is never crawled, the issue is discoverability: internal linking, sitemap inclusion, or crawl budget allocation. This distinction guides the correct remediation strategy.

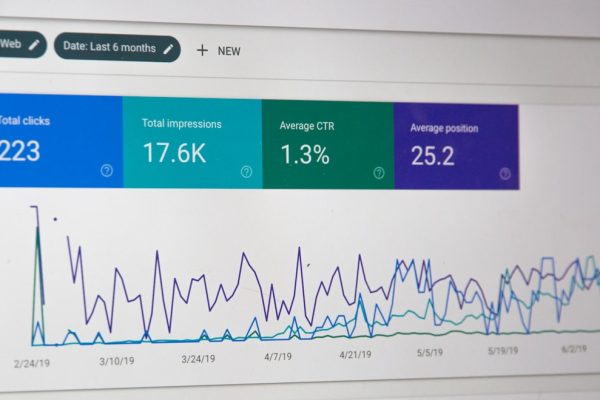

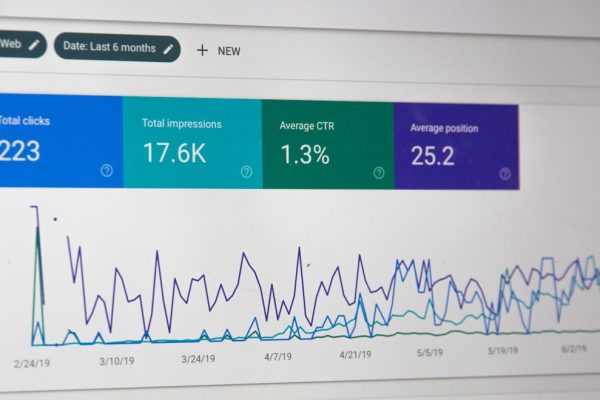

What is the difference between log file analysis and Google Search Console crawl stats?

Google Search Console crawl stats provide Google’s summarised view of crawl activity — aggregated daily totals, response time averages, and high-level breakdowns. Server logs provide raw, request-level detail: every individual URL request with exact timestamps, status codes, and response sizes. Log files are more granular and include requests from all bots, not just Googlebot. Search Console is easier to access but less detailed.

Do I need technical skills to perform log file analysis?

Basic analysis using dedicated tools (Screaming Frog Log File Analyser, JetOctopus) requires minimal technical skill: upload your log file and the tool handles parsing and visualisation. Advanced analysis using command-line tools, Python, or the ELK stack requires programming or system administration knowledge. For most SEO practitioners, a combination of dedicated tools for regular analysis and occasional command-line work for specific investigations is effective.