JavaScript SEO: Make JS-Heavy Sites Visible to Search Engines

How Google Renders JavaScript

Google’s rendering pipeline is fundamentally different from how a user’s browser loads a webpage. Understanding this pipeline is the foundation of JavaScript SEO. When Googlebot encounters a URL, it first fetches the HTML source — the raw response from your server. If the HTML contains JavaScript that modifies the DOM, Google must render the page to see the final content.

Google uses a headless Chromium-based renderer called the Web Rendering Service (WRS). As of 2026, this renderer runs an evergreen version of Chromium, meaning it supports modern JavaScript features, ES modules, async/await, and most Web APIs. The days of worrying about ES5 compatibility for Googlebot are over.

However, the rendering process introduces a critical delay. Google’s crawling and rendering are decoupled — pages are crawled first, then queued for rendering. This queue can introduce delays of seconds to days depending on Google’s resource allocation and your site’s crawl priority. During this gap, Google only sees the initial HTML source. If your initial HTML is empty (a typical client-side rendered SPA shell), Google sees nothing until rendering completes.

The Two Waves of Indexing

This decoupled process creates what practitioners call “two waves of indexing.” In the first wave, Google indexes content available in the raw HTML. In the second wave, after rendering, Google indexes the JavaScript-generated content. Google’s own engineers have described this model as a simplification, and the gap between the two steps is often short, but it is not guaranteed to be. For time-sensitive content such as news articles, product launches, and event pages, any rendering delay means critical content may not appear in search results when it matters most.

Resource Loading and Timeouts

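Google’s renderer has practical limits. Google does not publish a fixed rendering timeout; roughly five seconds after the initial page load is a commonly cited rule of thumb, not a documented guarantee. The renderer also does not click, scroll, or hover, so JavaScript that waits for user interaction (click handlers, scroll listeners) will not run, and content behind these interaction gates is invisible to Google. Lazy loading based on IntersectionObserver is different: it fires when an element enters the renderer’s viewport without any interaction, so it is generally safe where scroll-event listeners are not.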

Additionally, if your JavaScript depends on third-party resources that are blocked by robots.txt or are slow to load, rendering may fail or produce incomplete results. Google must be able to access all JavaScript files, CSS files, and API endpoints that contribute to your page content.

Rendering Strategies: CSR, SSR, SSG and ISR

The choice of rendering strategy is the most consequential technical decision for JavaScript SEO. Each approach has distinct implications for how search engines perceive your content.

Client-Side Rendering (CSR)

In pure client-side rendering, the server sends a minimal HTML shell — typically an empty div element and a JavaScript bundle. The browser (or Googlebot’s renderer) executes the JavaScript, fetches data from APIs, and builds the page content entirely on the client.

CSR is the default in classic single-page application tooling (React with the now-deprecated Create React App, Vue CLI, Angular CLI). For SEO, CSR is the highest-risk approach because it relies entirely on Google’s rendering pipeline. If rendering fails, times out, or is delayed, no content is visible. For a marketing or business website, pure CSR is rarely justifiable when server-rendered alternatives exist.

Server-Side Rendering (SSR)

SSR generates the complete HTML on the server for each request. When Googlebot fetches the page, it receives fully formed HTML with all content present — no JavaScript execution required for content visibility. The JavaScript then “hydrates” the page on the client, adding interactivity.

SSR is the gold standard for JavaScript SEO. Frameworks like Next.js (React), Nuxt.js (Vue), and Angular’s built-in SSR (formerly Angular Universal, now the @angular/ssr package) provide SSR capabilities. The trade-off is server load: every request requires server-side JavaScript execution, which is more resource-intensive than serving static files. For Singapore businesses on shared hosting or limited server resources, this may require infrastructure upgrades.
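
To make the idea concrete, here is a minimal sketch of what “fully formed HTML” means, written as a plain function rather than with any particular framework. The function names, the product fields, and the /client.js path are all hypothetical; a real project would normally rely on its framework’s renderer instead.

```javascript
// Escape text so product data cannot break out of the HTML it is placed in.
function escapeHtml(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Hypothetical server-side render function: it builds the whole document,
// content included, before anything is sent to the client.
function renderProductPage(product) {
  const title = escapeHtml(product.name);
  const description = escapeHtml(product.description);
  return [
    "<!doctype html>",
    '<html lang="en">',
    "<head>",
    `<title>${title}</title>`,
    `<meta name="description" content="${description}">`,
    "</head>",
    "<body>",
    `<main id="app"><h1>${title}</h1><p>${description}</p></main>`,
    // The client bundle hydrates the markup above; it does not create it.
    '<script src="/client.js" defer></script>',
    "</body>",
    "</html>",
  ].join("\n");
}
```

A crawler that fetches this response can read the heading and description straight from the source, whether or not the script ever runs.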

Static Site Generation (SSG)

SSG pre-renders all pages at build time, producing static HTML files. These files are served directly without server-side processing, combining the SEO benefits of SSR with the performance of static hosting. Next.js, Gatsby, Nuxt, and Astro all support SSG.

The limitation is that content updates require a rebuild and redeployment. For sites with frequently changing content (real-time pricing, user-generated content), pure SSG may not be practical. However, for marketing sites, blogs, and documentation, SSG is often the ideal approach.

Incremental Static Regeneration (ISR)

ISR, popularised by Next.js, combines SSG with background regeneration. Pages are statically generated at build time and are then regenerated in the background, either once a specified revalidation interval has elapsed or when a revalidation is triggered on demand (for example, after a CMS update). This provides the SEO and performance benefits of static files whilst allowing content to update without full rebuilds.

For e-commerce sites with thousands of product pages, ISR allows the most popular pages to be pre-rendered whilst less-visited pages are rendered on demand and then cached. This is particularly effective for Singapore retailers with large catalogues. An experienced web design partner can help determine which rendering strategy suits your specific requirements.
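
The behaviour described above is essentially a stale-while-revalidate cache. The following sketch shows that logic in isolation; it is not how Next.js implements ISR internally, and createIsrCache, render, and revalidateSeconds are hypothetical names chosen for illustration.

```javascript
// Hypothetical ISR-style cache: serve the stored HTML immediately and, once it
// is older than revalidateSeconds, rebuild it in the background.
function createIsrCache({ render, revalidateSeconds, now = () => Date.now() }) {
  const entries = new Map(); // path -> { html, builtAt, refreshing }

  async function get(path) {
    const entry = entries.get(path);

    // First request for a page that was not pre-built: render on demand, then cache.
    if (!entry) {
      const html = await render(path);
      entries.set(path, { html, builtAt: now(), refreshing: false });
      return html;
    }

    const ageSeconds = (now() - entry.builtAt) / 1000;
    if (ageSeconds >= revalidateSeconds && !entry.refreshing) {
      // Stale: start a rebuild but do not make this visitor wait for it.
      entry.refreshing = true;
      Promise.resolve()
        .then(() => render(path))
        .then((html) => entries.set(path, { html, builtAt: now(), refreshing: false }))
        .catch(() => {
          entry.refreshing = false; // keep serving the old copy if the rebuild fails
        });
    }
    return entry.html;
  }

  return { get };
}
```

The visitor (or crawler) who triggers a rebuild still receives the previous copy; the refreshed HTML is served from the next request onwards. This sketch does not deduplicate simultaneous first requests for the same path, which a production cache would need to handle.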

Dynamic Rendering as an Interim Solution

Dynamic rendering serves pre-rendered HTML to search engine bots whilst serving the standard client-side rendered version to users. It acts as a middleware layer that detects the user agent and routes requests accordingly.

How Dynamic Rendering Works

A dynamic rendering solution sits between the server and the client. When a request arrives, the middleware checks the user agent string. If it identifies a search engine bot (Googlebot, Bingbot, etc.), it routes the request to a headless browser that renders the page and returns the resulting HTML. Human users receive the standard CSR application.
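
The routing decision itself is small. The sketch below assumes a simplified request object with method, path, and headers fields, and uses an illustrative (not exhaustive) list of bot names; isSearchBot and chooseBackend are hypothetical helpers, not part of any library.

```javascript
// Illustrative list only. Real deployments usually maintain a longer pattern
// set and verify crawler IP ranges as well, because user agents can be spoofed.
const BOT_PATTERN = /googlebot|bingbot|duckduckbot|baiduspider|yandex/i;

function isSearchBot(userAgent) {
  return BOT_PATTERN.test(userAgent || "");
}

// Decide which backend should answer a request. "prerender" stands for the
// headless-browser service and "spa" for the normal client-side application.
function chooseBackend(request) {
  // Only page requests are pre-rendered; scripts, styles and images pass through.
  const isPageRequest =
    request.method === "GET" && !/\.[a-z0-9]+$/i.test(request.path);
  const userAgent = request.headers["user-agent"];
  return isPageRequest && isSearchBot(userAgent) ? "prerender" : "spa";
}
```

Keeping static assets out of the pre-render path matters: the headless browser itself has to download the same bundles in order to produce the HTML.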

Tools like Prerender.io provide dynamic rendering capabilities. Rendertron, Google’s open-source take on the same idea, has been deprecated and archived, so it is best avoided for new projects. Implementation typically involves adding middleware to your web server or using a CDN-level solution.

Google’s Position on Dynamic Rendering

Google describes dynamic rendering as a “workaround” rather than a long-term solution, and its documentation now recommends server-side rendering, static rendering, or hydration instead. Dynamic rendering is not considered cloaking because the rendered content should be identical to what users see; only the delivery mechanism differs. However, Google has signalled that as its rendering capabilities improve, the need for dynamic rendering diminishes.

Dynamic rendering remains valuable in specific scenarios: legacy applications where migrating to SSR is prohibitively expensive, large SPAs with complex JavaScript that occasionally causes rendering failures, and as a temporary measure during migration to a server-rendered architecture.

Implementation Considerations

When implementing dynamic rendering, ensure the pre-rendered HTML matches the client-rendered output exactly. Discrepancies can be flagged as cloaking. Monitor rendering freshness — if your pre-rendered cache serves stale content to Googlebot, indexed content will lag behind what users see. Set appropriate cache expiration based on your content update frequency.
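
One rough way to monitor parity is to compare the visible text of the two versions and ignore markup differences. The sketch below does this with regular expressions, which is only an approximation (a real audit would use an HTML parser); visibleText and sameVisibleContent are hypothetical helper names.

```javascript
// Reduce an HTML string to its visible text: drop script, style and noscript
// blocks, strip the remaining tags, then collapse whitespace.
function visibleText(html) {
  return html
    .replace(/<(script|style|noscript)\b[^>]*>[\s\S]*?<\/\1>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// True when the bot-facing and user-facing versions carry the same text.
function sameVisibleContent(prerenderedHtml, clientHtml) {
  return visibleText(prerenderedHtml) === visibleText(clientHtml);
}
```

Run against a sample of URLs on a schedule, a check like this can catch both accidental content drift and a stale pre-render cache before they affect what is indexed.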

Hydration and Partial Hydration

Hydration is the process of attaching JavaScript event listeners and state to server-rendered HTML, making static content interactive. Understanding hydration patterns is important because they affect both SEO and user experience metrics that influence rankings.

Full Hydration

Traditional SSR frameworks use full hydration: the server renders HTML, the browser receives it, then the entire JavaScript bundle loads and “hydrates” the page by attaching event handlers to every interactive element. During hydration, the page is visible but not interactive — a state that can negatively impact Interaction to Next Paint (INP) metrics.

The SEO concern with full hydration is the JavaScript bundle size. Large bundles delay hydration, increasing Time to Interactive and potentially affecting Core Web Vitals scores. For content-heavy pages where interactivity is limited, full hydration loads unnecessary JavaScript.

Partial Hydration

Partial hydration (also called “islands architecture”) only hydrates interactive components, leaving static content as plain HTML. Frameworks like Astro implement this natively — static content ships as zero-JavaScript HTML, and only interactive “islands” (search bars, forms, interactive widgets) receive JavaScript.

For SEO, partial hydration is excellent. It minimises JavaScript payload, reduces time to interactive, and ensures content is available in the initial HTML. For marketing sites and content-heavy pages, partial hydration provides the best balance of interactivity and SEO performance.

Progressive Hydration and Streaming SSR

Progressive hydration defers hydration of below-the-fold components until they are needed (e.g., when scrolled into view). React 18’s streaming SSR sends HTML in chunks as components render on the server, reducing Time to First Byte and allowing the browser to begin parsing HTML before the full page is complete.

These techniques improve user experience metrics that Google measures, particularly Largest Contentful Paint (LCP) and INP. While Google does not directly factor hydration strategy into rankings, the resulting performance improvements contribute to better Core Web Vitals scores.

Common JavaScript SEO Issues

Certain JavaScript patterns consistently cause SEO problems. Recognising and avoiding these patterns prevents indexing failures.

Client-Side Routing Without Server-Side Support

SPA frameworks use client-side routing — the URL changes in the browser address bar without a server request. This creates a critical problem: if a user (or Googlebot) navigates directly to a deep URL, the server must return the correct page. Without server-side route handling, deep URLs return 404 errors or the generic SPA shell.

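Ensure your server is configured to handle all routes defined in your SPA, either by returning index.html for those routes (for CSR) or by implementing proper SSR for each route. URLs that do not correspond to a real route should still return a genuine 404 status; serving the shell with a 200 response for every unmatched path creates soft 404s.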
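
As a minimal sketch of that server-side decision, assuming a small hand-written route table (KNOWN_ROUTES and both function names are hypothetical):

```javascript
// Hypothetical route table for the SPA; ":slug" marks a dynamic segment.
const KNOWN_ROUTES = ["/", "/pricing", "/blog/:slug"];

function matchesRoute(pattern, path) {
  const patternParts = pattern.split("/").filter(Boolean);
  const pathParts = path.split("/").filter(Boolean);
  if (patternParts.length !== pathParts.length) return false;
  return patternParts.every(
    (part, i) => part.startsWith(":") || part === pathParts[i]
  );
}

// Serve the shell for routes the app really has; answer everything else with
// a genuine 404 so unknown URLs are not treated as valid pages.
function resolveRequest(path, routes = KNOWN_ROUTES) {
  const known = routes.some((pattern) => matchesRoute(pattern, path));
  return known
    ? { status: 200, file: "index.html" }
    : { status: 404, file: "404.html" };
}
```

With this table, /blog/javascript-seo resolves to the shell with a 200 status, while /blog on its own (no slug) and any unknown path receive a 404.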

Lazy-Loaded Content Below the Fold

Lazy loading images and below-the-fold content is a performance best practice, but implementation matters for SEO. Googlebot does not scroll or otherwise interact with the page, so lazy loading that depends on scroll events will never trigger. IntersectionObserver-based loading is safer because it fires when an element becomes visible in the renderer’s viewport, but content that only loads after an interaction remains invisible.

Use native lazy loading (the loading="lazy" attribute) for images, which Googlebot handles correctly. For lazy-loaded text content, ensure there is a noscript fallback or that the initial HTML includes the content even if it is visually hidden until scroll.

JavaScript Redirects

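Redirects implemented via JavaScript (window.location.href) rather than server-side 301/302 responses are less reliable. Googlebot only discovers a JavaScript redirect after the page has been rendered, so it is processed later than an HTTP redirect and not at all if rendering fails. Whether such redirects consolidate ranking signals as dependably as a 301 is also uncertain. Implement redirects at the server level wherever you can.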
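
A server-level redirect can be as simple as a lookup that runs before any page code is served. The sketch below assumes a hypothetical redirect table and returns a plain object describing the response; how that object is turned into a real HTTP response depends on your server or framework.

```javascript
// Hypothetical redirect table: old path -> new path.
const REDIRECTS = new Map([
  ["/old-pricing", "/pricing"],
  ["/blog/js-seo-2019", "/blog/javascript-seo"],
]);

// Describe the response a server would send before any page code runs,
// or return null when the path needs no redirect.
function redirectResponse(path, redirects = REDIRECTS) {
  const target = redirects.get(path);
  if (!target) return null;
  return { status: 301, headers: { Location: target } };
}
```

Because the 301 is part of the HTTP response, crawlers see it at fetch time without needing to render anything.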

AJAX-Loaded Critical Content

Content loaded via AJAX calls after initial page render is vulnerable to rendering failures. If the API endpoint is slow, returns errors, or is blocked, the content never appears. For critical SEO content (product descriptions, article text, category content), ensure it is present in the initial server response rather than loaded asynchronously.

Blocked Resources

If robots.txt blocks JavaScript or CSS files that Googlebot needs to render your page, the rendering will fail or produce incorrect results. Audit your robots.txt to ensure all resources required for rendering are accessible. Google Search Console’s URL Inspection tool will flag blocked resources.
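
For a quick audit, the check can be scripted. The sketch below is a deliberately simplified robots.txt evaluator: it handles user-agent groups and prefix-based Allow and Disallow rules, but not the * and $ wildcards, so treat its output as a first pass rather than a definitive verdict. All function names are hypothetical.

```javascript
// Collect the Allow/Disallow rules that apply to one crawler. A group naming
// the crawler takes precedence over the "*" group.
function rulesFor(robotsTxt, agent) {
  const groups = new Map(); // lower-cased user agent -> rules
  let currentAgents = [];
  let lastWasAgent = false;

  for (const rawLine of robotsTxt.split(/\r?\n/)) {
    const line = rawLine.replace(/#.*/, "").trim();
    const separator = line.indexOf(":");
    if (separator === -1) continue;
    const field = line.slice(0, separator).trim().toLowerCase();
    const value = line.slice(separator + 1).trim();

    if (field === "user-agent") {
      if (!lastWasAgent) currentAgents = []; // a new group starts here
      const name = value.toLowerCase();
      currentAgents.push(name);
      if (!groups.has(name)) groups.set(name, []);
      lastWasAgent = true;
    } else if (field === "allow" || field === "disallow") {
      for (const name of currentAgents) {
        groups.get(name).push({ allow: field === "allow", path: value });
      }
      lastWasAgent = false;
    }
  }
  return groups.get(agent.toLowerCase()) || groups.get("*") || [];
}

// True when the most specific matching rule for this path is a Disallow.
function isBlocked(robotsTxt, path, agent = "googlebot") {
  let winner = null;
  for (const rule of rulesFor(robotsTxt, agent)) {
    if (rule.path === "" || !path.startsWith(rule.path)) continue;
    // Longest matching rule wins; on a tie, Allow beats Disallow.
    const longer = !winner || rule.path.length > winner.path.length;
    const tieAllow = winner && rule.path.length === winner.path.length && rule.allow;
    if (longer || tieAllow) winner = rule;
  }
  return winner !== null && !winner.allow;
}
```

Feeding it the list of script and stylesheet URLs a page loads gives a fast indication of which ones Googlebot would be refused.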

A thorough digital marketing audit should include JavaScript rendering validation as a standard component, particularly for sites built on modern JavaScript frameworks.

Debugging JavaScript Rendering Problems

When JavaScript content is not appearing in Google’s index, systematic debugging is essential. Several tools and techniques help identify the root cause.

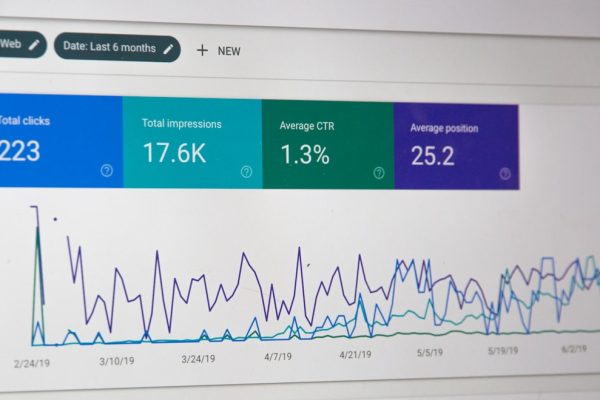

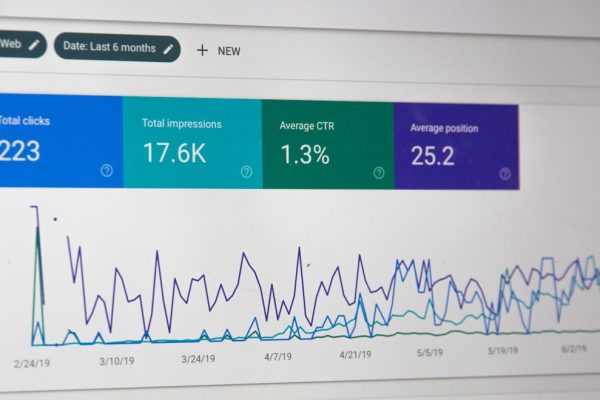

Google Search Console URL Inspection

The URL Inspection tool in Search Console is the most authoritative debugging tool. Its HTML view shows the rendered HTML, that is, what Google sees after JavaScript execution. Compare that against the raw response from your server (view-source in a browser, or a plain HTTP fetch): if critical content appears only in the rendered version, you have a rendering dependency. If it does not appear in either, the issue is in your code rather than Google’s rendering.
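
The comparison described above can be sketched as a small classifier. It assumes you have already saved the raw and rendered HTML as strings and chosen a handful of phrases that must be indexable; classifyPhrases is a hypothetical helper name.

```javascript
// Classify each critical phrase by where it first becomes visible.
function classifyPhrases(rawHtml, renderedHtml, phrases) {
  const raw = rawHtml.toLowerCase();
  const rendered = renderedHtml.toLowerCase();
  const report = {};
  for (const phrase of phrases) {
    const needle = phrase.toLowerCase();
    if (raw.includes(needle)) {
      report[phrase] = "in raw HTML"; // safe: no rendering needed
    } else if (rendered.includes(needle)) {
      report[phrase] = "render-dependent"; // at risk if rendering fails
    } else {
      report[phrase] = "missing from both"; // a bug in the page itself
    }
  }
  return report;
}
```

Anything reported as render-dependent is a candidate for moving into the server response; anything missing from both points to a problem in your own code.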

The “Live Test” option renders the page in real time, whilst the “View Crawled Page” shows what Google actually indexed. Discrepancies between these indicate a rendering failure during the actual crawl.

Google’s Rich Results Test

The Rich Results Test renders a page and shows the resulting HTML and a screenshot, which gives a quick way to verify rendering without waiting for a live crawl. The Mobile-Friendly Test, which used to serve the same purpose, was retired by Google in late 2023. Bear in mind that the test may render differently from the actual Googlebot crawl because it runs in real time with potentially different resource availability.

Chrome DevTools for Bot Simulation

To simulate Googlebot’s view, use Chrome DevTools with specific settings: disable JavaScript to see the raw HTML, use the Performance panel to identify rendering bottlenecks, and check the Network panel for blocked or failed resource loads. The “Rendering” drawer in DevTools allows you to emulate various conditions that affect rendering.

Server Log Analysis for Resource Requests

Check your server logs to verify that Googlebot is requesting all necessary JavaScript and CSS files. If key resources are not being requested, Google may be relying on a cached version or encountering an error before reaching those resources. Cross-reference Googlebot’s resource requests against the resources your page requires for complete rendering.
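
A first pass at this cross-reference might look like the sketch below. It assumes access logs in the common “combined” format and identifies Googlebot by user agent only; since user agents can be spoofed, a stricter audit would also verify the requesting IP addresses. The function names are hypothetical.

```javascript
// Matches the request and status portion of a combined-format log line,
// for example: "GET /static/app.js HTTP/1.1" 200
const REQUEST_PATTERN = /"(?:GET|HEAD) (\S+) HTTP\/[\d.]+" (\d{3})/;

// Collect script and stylesheet paths requested by Googlebot, with the most
// recent status code seen for each.
function googlebotAssetRequests(logLines) {
  const seen = new Map(); // path -> last status code
  for (const line of logLines) {
    if (!/Googlebot/i.test(line)) continue;
    const match = REQUEST_PATTERN.exec(line);
    if (!match) continue;
    const path = match[1].split("?")[0]; // ignore cache-busting query strings
    if (/\.(?:js|mjs|css)$/i.test(path)) seen.set(path, Number(match[2]));
  }
  return seen;
}

// Assets the page needs that Googlebot either never requested or last
// received an error status for.
function missingAssets(requiredPaths, logLines) {
  const seen = googlebotAssetRequests(logLines);
  return requiredPaths.filter((path) => {
    const status = seen.get(path);
    return status === undefined || status >= 400;
  });
}
```

An asset that shows up in the result is not necessarily a problem, because Google caches resources aggressively, but it is the right place to start looking.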

Rendering Comparison Tools

Tools like Screaming Frog’s JavaScript rendering mode and Sitebulb’s rendering comparison can crawl your entire site and compare raw HTML against rendered HTML at scale. This identifies pages where JavaScript rendering adds significant content — pages that are at risk if rendering fails.

Framework-Specific SEO Guidance

Each JavaScript framework has specific SEO considerations and recommended approaches.

React and Next.js

Next.js is the recommended React framework for SEO-critical sites. It supports SSR, SSG, and ISR out of the box. Key configuration for SEO includes setting meta tags on each page (the Head component in the Pages Router, or the metadata export and generateMetadata function in the App Router), fetching data on the server (getStaticProps or getServerSideProps in the Pages Router, async Server Components in the App Router), implementing proper 404 and error pages, and using next/image for optimised image handling with appropriate alt attributes.

For existing React SPAs not on Next.js, consider migrating to Next.js incrementally using the app router, or implementing dynamic rendering as a bridge solution.

Vue and Nuxt.js

Nuxt.js provides Vue’s SSR capabilities with SEO-friendly defaults. Nuxt 3 uses Nitro as its server engine, providing excellent performance for server-rendered pages. Configure the useHead composable for per-page meta tags, use useFetch for data fetching that works during SSR, and set site-wide meta defaults under app.head in nuxt.config.ts.

Angular and Angular Universal

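Angular Universal added SSR to Angular applications; since Angular 17 the same capability ships with the framework as the @angular/ssr package. Angular’s approach is more complex than React or Vue SSR due to the framework’s dependency injection system. Ensure TransferState is used to prevent duplicate API calls during hydration (recent versions transfer HttpClient responses automatically when hydration is enabled), and implement prerendering for static pages using Angular’s prerender builder.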

Astro for Content Sites

For content-heavy sites (blogs, marketing sites, documentation), Astro is an excellent choice. It generates static HTML by default with zero JavaScript, adding JavaScript only for interactive components. This “islands architecture” produces very lean pages whose content is fully present in the initial HTML. For Singapore content marketing programmes that prioritise organic traffic, Astro provides a strong technical foundation.

SvelteKit

SvelteKit provides SSR and SSG with minimal configuration. Svelte’s compile-time approach produces smaller JavaScript bundles than React or Vue, improving hydration speed and Core Web Vitals. The +page.server.js pattern cleanly separates server-side data loading from client-side rendering.

Frequently Asked Questions

Can Google render JavaScript properly in 2026?

Yes, Google’s Web Rendering Service runs an evergreen version of Chromium and can render most modern JavaScript correctly. However, rendering is resource-intensive and introduces delays. Content that relies on JavaScript rendering is indexed later than content available in raw HTML, and rendering can fail for pages with complex dependencies, blocked resources, or timeout issues.

Is server-side rendering mandatory for SEO?

SSR is not strictly mandatory — Google can render client-side JavaScript. However, SSR is strongly recommended for SEO-critical pages because it eliminates rendering delays, rendering failures, and the two-wave indexing problem. For pages where organic search traffic is important, SSR provides significantly more reliable indexing.

Does dynamic rendering count as cloaking?

Google has explicitly stated that dynamic rendering is not cloaking, provided the pre-rendered content matches what users see. The intent of dynamic rendering is to serve the same content more efficiently, not to deceive. However, if you serve different content to bots than to users, that would be cloaking regardless of the technical mechanism.

How do I check if Googlebot can render my JavaScript pages?

Use the URL Inspection tool in Google Search Console to see the rendered HTML of any page, and compare it with the raw HTML your server returns. The “Live Test” option renders the page in real time. Compare the rendered output against what users see in a browser. The Rich Results Test also shows rendered pages; the Mobile-Friendly Test that once did the same has been retired.

What JavaScript features does Googlebot not support?

Googlebot’s Chromium-based renderer supports nearly all standard JavaScript features. It does not support features requiring user interaction (click, scroll, hover events), some Web APIs like WebGL and WebRTC, service workers during rendering, and features requiring persistent storage (localStorage, IndexedDB values from previous sessions). Content behind interaction-based triggers will not be rendered.

Does JavaScript SEO affect Core Web Vitals?

The rendering strategy directly impacts Core Web Vitals. Client-side rendered pages typically have worse LCP (content appears later), worse INP (heavy JavaScript bundles delay interactivity), and potentially worse CLS (content shifts as JavaScript loads and renders). SSR and SSG generally produce better Core Web Vitals scores because content is available immediately and JavaScript bundles are smaller or unnecessary.

Should I use a JavaScript framework for my marketing website?

For a primarily content-driven marketing website, a JavaScript SPA framework (React, Vue, Angular) adds complexity without proportional benefit. Static site generators (Astro, Hugo, Eleventy) or server-rendered frameworks (Next.js, Nuxt.js) with static export are better choices. A well-built WordPress or traditional CMS site with minimal JavaScript often provides the best SEO performance for marketing content.

How does lazy loading affect JavaScript SEO?

Lazy loading images with the native loading="lazy" attribute is SEO-safe, and Googlebot handles it correctly. JavaScript-based lazy loading using IntersectionObserver works if elements are in the initial viewport during rendering. Content lazy-loaded based on scroll events will not be triggered by Googlebot, which does not scroll. Ensure all critical content is available without scroll-triggered loading.

What is the rendering budget and how does it differ from crawl budget?

Crawl budget refers to how many pages Google will request from your server. Rendering budget is an informal term for Google’s capacity to render JavaScript pages. Pages requiring rendering are queued after crawling, and Google allocates rendering resources based on page importance and demand. Sites with thousands of JavaScript-dependent pages may experience rendering backlogs separate from crawl budget constraints.

How can I migrate from a CSR SPA to SSR without breaking SEO?

Migrate incrementally: start by implementing SSR for the highest-traffic pages, maintain existing URLs (no URL changes during migration), use Google Search Console to monitor indexing throughout, implement proper canonical tags on all pages, and validate rendering of each migrated page before proceeding. Frameworks like Next.js support incremental adoption, allowing CSR and SSR pages to coexist during migration.