Indexing Issues: Diagnose and Fix Pages Google Will Not Index

How Google’s Indexing Pipeline Works

Before you can resolve indexing problems, you need to understand the three-stage pipeline every URL passes through before it appears in search results. A failure at any stage keeps the page invisible, but the cause and the fix differ depending on where the process breaks down.

Discovery is the first stage: Google finds URLs through links from already-indexed pages, XML sitemaps, URL Inspection submissions and redirects. If Google does not know a URL exists, it cannot crawl or index it. Crawling comes next: Googlebot fetches the URL’s HTML and HTTP response headers, then renders any JavaScript. Not every discovered URL gets crawled promptly; low-priority URLs may sit in the queue for weeks. Indexing is the final gate: Google processes the content and decides whether to include it in the index based on quality, uniqueness, canonical status and relevance. A page can pass crawling but fail indexing if Google determines it does not add sufficient value.

Since the Helpful Content updates, this quality gate has become increasingly strict. Understanding which stage your page is failing at is the essential first step. Google Search Console’s index coverage report provides this information, making it the starting point for any indexing diagnosis. A professional SEO audit typically begins with this exact analysis.

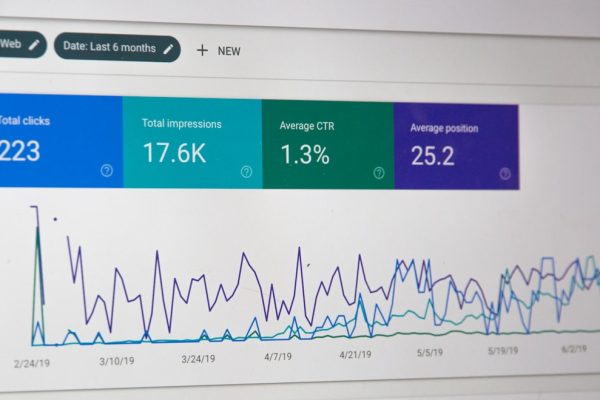

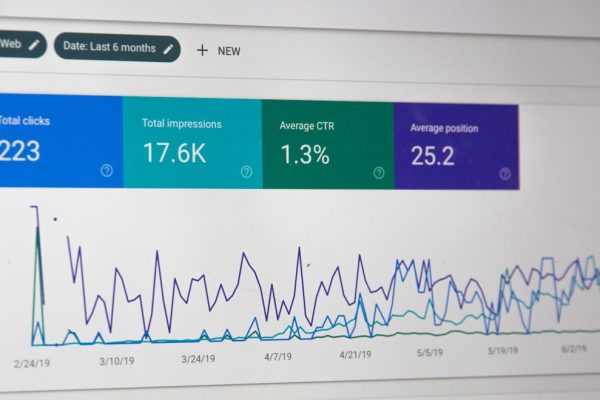

Reading the Index Coverage Report

The index coverage report categorises all URLs Google knows about into four statuses: Valid (indexed), Valid with Warnings, Excluded and Error. Newer versions of Search Console present the same data in the Page indexing report, grouped simply as Indexed and Not indexed. Not all excluded pages are problems. Google legitimately excludes pages you have marked as noindex, duplicates where Google chose a canonical, and URLs blocked by robots.txt. The key is distinguishing intentionally excluded pages from pages that should be indexed but are not.

“Crawled - currently not indexed” and “Discovered - currently not indexed” are the two statuses that most often indicate genuine problems. “Alternate page with proper canonical tag” and “Duplicate without user-selected canonical” are common on sites with duplicate content. Review “Excluded by noindex tag” to make sure no important pages are blocked by accident.

Track trends over time rather than fixating on raw numbers. A gradual increase in “Crawled - currently not indexed” over several months suggests a growing quality issue. A sudden spike after a site update usually points to a technical regression. Review these trends weekly as part of ongoing SEO monitoring.
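The distinction between a gradual rise and a sudden spike can be sketched in a few lines of Python. This is an illustration only: the function name `classify_trend` and both thresholds (`spike_ratio`, `drift_ratio`) are assumptions chosen for the example, not values Google publishes, and the weekly counts would come from your own exports of the report.

```python
def classify_trend(weekly_counts, spike_ratio=1.5, drift_ratio=1.2):
    """Label a series of weekly 'not indexed' counts.

    spike_ratio and drift_ratio are hypothetical thresholds;
    tune them to the size and volatility of your own site.
    """
    if len(weekly_counts) < 2:
        return "insufficient data"

    # A sudden spike: any single week grows sharply over the previous week.
    for previous, current in zip(weekly_counts, weekly_counts[1:]):
        if previous > 0 and current / previous >= spike_ratio:
            return "sudden spike"

    # A gradual increase: no single jump, but the period as a whole has grown.
    first, last = weekly_counts[0], weekly_counts[-1]
    if first > 0 and last / first >= drift_ratio:
        return "gradual increase"

    return "stable"


print(classify_trend([100, 105, 110, 118, 125]))  # gradual increase
print(classify_trend([100, 102, 180, 185]))       # sudden spike
```

A spike is checked first because a regression after a deployment calls for a faster response than a slow drift in content quality.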

Fixing Crawled but Not Indexed Pages

This status means Google successfully fetched the page but decided it was not worth including in the index. It is one of the most frustrating indexing issues SEO professionals encounter, because the cause is qualitative rather than technical and there is no single setting to correct.

Thin content is the primary cause: pages with very little unique content, boilerplate text or near-duplicate material. Product pages with only a title and price, category pages consisting of link lists, and blog posts under 300 words are typical candidates. Low-quality content that does not match search intent is another common trigger. Even substantial word counts fail if the content does not answer any query better than existing indexed pages.

For each affected page, ask: Does this page offer unique content unavailable on my other pages? Does it satisfy a specific search intent? Is there an existing indexed page covering the same topic? Remediation strategies include substantially expanding thin content with genuine value, consolidating duplicate pages into comprehensive resources with canonical tags, and adding noindex to pages that simply do not warrant indexation such as utility pages and internal search results. For content quality issues, rewrite with genuine depth, original analysis and actionable advice. A professional content marketing approach focuses on creating content that deserves indexation.

Resolving Discovered but Not Indexed Status

This status indicates Google knows the URL exists but has not yet crawled it. The URL sits in Googlebot’s crawl queue, making this primarily a crawl budget or prioritisation issue rather than a content quality problem.

Large sites with thousands of low-value pages consume crawl budget on content Google is unlikely to index, leaving higher-value pages stuck in the queue. Faceted navigation generating millions of URL variations is a classic culprit. Poor site architecture where important pages require many clicks from the homepage signals low importance to Google. Server performance issues during peak traffic periods, common on shared hosting in Singapore, can trigger this status at scale.

Improve internal linking to affected pages from main navigation, sidebars or high-authority content. Add affected URLs to your XML sitemap and submit it through Search Console. Reduce crawl waste by blocking low-value URL patterns with robots.txt or adding noindex to pages that do not need indexation. Address server performance by keeping response times consistently below 500 milliseconds. Every low-value page Google crawls takes budget away from your important pages.

Noindex, Robots.txt and Canonical Errors

Sometimes the indexing failure is straightforward: you are explicitly telling Google not to index pages, often unintentionally. The most common scenario is a staging noindex tag that was not removed before launch. Check every page template and dynamically generated page for noindex directives in both HTML meta tags and X-Robots-Tag HTTP headers.
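As a minimal sketch of that check, the Python below looks for a noindex directive in both places, given a page's HTML and its response headers. The names `RobotsMetaParser` and `find_noindex` are hypothetical, and fetching the page is left out; the sketch assumes you already have the HTML as a string and the headers as a dictionary.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {k.lower(): (v or "") for k, v in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.extend(
                d.strip().lower() for d in attr.get("content", "").split(",")
            )


def find_noindex(html, headers):
    """Return the places where a noindex directive was found."""
    sources = []

    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    if "noindex" in parser.directives or "none" in parser.directives:
        sources.append("meta tag")

    for name, value in headers.items():
        if name.lower() != "x-robots-tag":
            continue
        # Drop an optional user-agent prefix such as "googlebot: noindex".
        tokens = [t.split(":")[-1].strip().lower() for t in value.split(",")]
        if "noindex" in tokens or "none" in tokens:
            sources.append("X-Robots-Tag header")

    return sources


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(find_noindex(page, {"X-Robots-Tag": "noindex"}))
```

Running this across one URL per template is usually enough to catch a leftover staging directive, since the tag tends to be set at template level rather than per page.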

Robots.txt blocking prevents crawling entirely, which is different from noindex. If robots.txt blocks a page with a noindex tag, Google never sees the noindex directive and may continue showing the URL in results with a “No information is available” snippet. Use noindex for removal from search results, not robots.txt.
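The conflict described here can be detected mechanically. The sketch below uses Python's standard `urllib.robotparser` to list noindexed URLs that robots.txt also blocks, which means Google cannot see the noindex. The function name `blocked_noindex_urls` and the sample URLs are assumptions for illustration. Note that the standard-library parser matches simple path prefixes and does not implement Google's wildcard extensions, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_noindex_urls(robots_txt, noindex_urls, user_agent="Googlebot"):
    """Return noindexed URLs that robots.txt prevents the crawler from fetching."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in noindex_urls if not parser.can_fetch(user_agent, url)]


robots_txt = """\
User-agent: *
Disallow: /search/
"""

# Hypothetical list of pages known to carry a noindex directive.
noindex_pages = [
    "https://example.com/search/?q=shoes",
    "https://example.com/thank-you",
]

for url in blocked_noindex_urls(robots_txt, noindex_pages):
    print("noindex is invisible to Googlebot:", url)
```

Any URL this reports needs its robots.txt rule lifted, at least until Google has recrawled the page and processed the noindex.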

Canonical misconfigurations are another common cause. If Page A’s canonical tag points to Page B, Google indexes B and excludes A. This is correct when intentional but problematic when caused by CMS bugs, template errors or incorrect URL resolution. Audit canonical tags to ensure every page either self-references or intentionally defers to another page. Tools like Screaming Frog flag pages where the canonical differs from the URL. Your web development team should verify canonical configuration after every major site update.
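A canonical audit of this kind can be approximated with the standard library. The sketch below extracts the first `rel="canonical"` link from a page and reports whether it is missing, self-referencing or pointing elsewhere. The names `CanonicalParser` and `canonical_status` are hypothetical, and the comparison is deliberately exact, so a trailing-slash or protocol mismatch is reported as pointing elsewhere.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = {k: (v or "") for k, v in attrs}
        if "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def canonical_status(page_url, html):
    """Classify a page's canonical tag relative to its own URL."""
    parser = CanonicalParser()
    parser.feed(html)
    if parser.canonical is None:
        return "missing"
    # Resolve relative hrefs against the page URL before comparing.
    target = urljoin(page_url, parser.canonical)
    if target == page_url:
        return "self-referencing"
    return "points to " + target


html = '<head><link rel="canonical" href="/shoes/"></head>'
print(canonical_status("https://example.com/shoes/?sort=price", html))
```

Pages reported as pointing elsewhere are not necessarily wrong; the point of the audit is to confirm that each such case is intentional.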

Cleaning Up Index Bloat

Index bloat is the opposite problem: too many pages indexed, including pages that should not be. While having more indexed pages might seem beneficial, bloat dilutes your site’s quality signals, wastes crawl budget and can suppress rankings across your entire domain.

Compare the number of indexed pages via a site: search or the coverage report against the pages you intend to have indexed. Common bloat sources include indexed parameter URLs, internal search results, pagination pages, thin tag and archive pages, and utility pages like login screens. Google’s Helpful Content system evaluates quality at the site level, meaning thousands of low-quality indexed pages can suppress rankings for your genuinely valuable content.

Systematically noindex low-value indexed pages. For URL patterns that should not be crawled at all, add robots.txt disallow rules, but remember existing indexed URLs also need noindex tags for removal since robots.txt alone will not de-index them. For Singapore e-commerce sites, faceted navigation is often the largest bloat source. Block faceted URLs from indexing while keeping core category pages accessible.
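One way to separate faceted URLs from core category pages is to classify them by query parameter. The sketch below is an illustration under stated assumptions: the parameter names in `FACET_PARAMS` are hypothetical and must be replaced with the filters your own platform generates, and `indexing_plan` is an invented helper name.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical facet parameters; replace with the filters your store generates.
FACET_PARAMS = {"color", "size", "sort", "price", "brand"}


def is_faceted(url):
    """True if the URL carries at least one known facet parameter."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    return any(name.lower() in FACET_PARAMS for name in query)


def indexing_plan(urls):
    """Map each URL to 'noindex' (faceted variation) or 'index' (core page)."""
    return {url: ("noindex" if is_faceted(url) else "index") for url in urls}


plan = indexing_plan([
    "https://example.com/shoes/",
    "https://example.com/shoes/?color=red&size=9",
    "https://example.com/shoes/?page=2",
])
for url, action in plan.items():
    print(action, url)
```

Pagination (`page=2` here) is left indexable in this sketch only because it is not in the facet list; whether to noindex it is a separate decision.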

Crawl Budget Optimisation Strategies

Crawl budget optimisation ensures Google spends its limited crawling resources on your most important pages. For small sites under a few thousand pages, budget is rarely a concern. For larger sites, it becomes critical.

Audit server logs to see which URLs Googlebot actually crawls. You may discover significant crawl budget going to low-value URL patterns: parameter variations, session IDs, sort orders and other waste. Block these patterns in robots.txt. Flatten site architecture so important pages are reachable within three clicks from the homepage. Use internal links strategically to boost perceived importance of key pages.
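A log audit along these lines can start very small. The sketch below counts Googlebot requests per top-level section and separates clean URLs from parameterised ones. It assumes access logs in the common combined format and identifies Googlebot by user-agent string alone, which can be spoofed; a production audit would also verify the requesting IP. The function name `googlebot_crawl_profile` and the sample log lines are made up for the example.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Matches the request part of a combined-format log line, e.g. "GET /path HTTP/1.1".
REQUEST_RE = re.compile(r'"(?:GET|HEAD) (\S+) HTTP/[^"]*"')


def googlebot_crawl_profile(log_lines):
    """Count Googlebot hits by (top-level section, clean or parameterised)."""
    counts = Counter()
    for line in log_lines:
        if "Googlebot" not in line:  # user-agent match only; not IP-verified
            continue
        match = REQUEST_RE.search(line)
        if not match:
            continue
        parts = urlsplit(match.group(1))
        segments = [s for s in parts.path.split("/") if s]
        section = "/" + segments[0] if segments else "/"
        kind = "parameterised" if parts.query else "clean"
        counts[(section, kind)] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    '66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /shoes?sort=price HTTP/1.1" 200 512 "-" "%s"' % GOOGLEBOT_UA,
    '66.249.66.1 - - [10/Mar/2025:10:00:05 +0000] "GET /shoes/red-sneaker HTTP/1.1" 200 2048 "-" "%s"' % GOOGLEBOT_UA,
    '203.0.113.9 - - [10/Mar/2025:10:00:09 +0000] "GET /shoes HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]
for (section, kind), hits in googlebot_crawl_profile(sample).most_common():
    print(section, kind, hits)
```

A high share of parameterised hits in a section is the signal to look for: those are the patterns worth blocking or consolidating.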

Ensure your XML sitemap contains only URLs that you want indexed and that return a 200 status code. Remove redirecting, noindexed and error URLs. Server response time directly affects crawl volume: reduce time-to-first-byte below 200 milliseconds where possible using caching, CDN distribution and efficient rendering. For Singapore-based sites, ensure hosting provides low-latency responses for Googlebot’s primarily US-based crawlers. Combining these techniques with a comprehensive digital marketing audit provides the complete picture of your site’s indexing health.
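The sitemap rule can be checked automatically once you have crawl data. The sketch below parses a sitemap with Python's standard XML library and flags entries that should be removed. The function name `sitemap_urls_to_remove` and the shape of `crawl_results` (a dictionary mapping each URL to its status code and noindex flag) are assumptions for the example; in practice that data would come from your crawler's export.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def sitemap_urls_to_remove(sitemap_xml, crawl_results):
    """Return {url: reason} for sitemap entries that should not be listed.

    crawl_results is a hypothetical structure:
    {url: {"status": int, "noindex": bool}}
    """
    root = ET.fromstring(sitemap_xml)
    flagged = {}
    for loc in root.iter("{%s}loc" % SITEMAP_NS):
        url = (loc.text or "").strip()
        result = crawl_results.get(url)
        if result is None:
            flagged[url] = "not crawled"
        elif result["status"] != 200:
            flagged[url] = "status %d" % result["status"]
        elif result["noindex"]:
            flagged[url] = "noindex"
    return flagged


sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "<url><loc>https://example.com/old-page</loc></url>"
    "</urlset>"
)
results = {
    "https://example.com/": {"status": 200, "noindex": False},
    "https://example.com/old-page": {"status": 301, "noindex": False},
}
print(sitemap_urls_to_remove(sitemap, results))
```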

Frequently Asked Questions

How long does it take for Google to index a new page?

High-authority sites may see new pages indexed within hours. Newer or lower-authority sites may wait days to weeks. Submitting via URL Inspection can accelerate the process, but Google still applies its own prioritisation and limits how many requests you can submit each day.

Should I use Request Indexing in Search Console?

Use it for specific high-priority pages needing fast indexation: product launches, time-sensitive content or pages stuck in the queue. Do not use it as a substitute for proper indexing signals. If you find yourself requesting indexing manually for every page, the underlying issue is your site’s crawlability or content quality.

Why does Google choose a different canonical than mine?

Google treats canonical tags as suggestions. If conflicting signals exist, such as internal links pointing to non-canonical URLs, sitemaps including non-canonical versions, or redirect chains suggesting alternatives, Google may override your specified canonical.

Can too many 404 errors cause indexing problems?

A moderate number of 404 errors does not harm overall indexing. However, a sudden spike after a migration can indicate broader technical issues. Persistent 404s on pages with significant backlinks waste accumulated link equity, so redirect these URLs to relevant alternative pages.

Does page speed affect indexing?

Speed does not directly determine indexation, but extremely slow pages may be crawled less frequently. Server timeouts can prevent crawling entirely, which does prevent indexing. Ensure consistent server performance.

How do I get Google to re-index updated content?

Use URL Inspection to request re-indexing. Update your sitemap’s lastmod date for the page. Share updated content on social media and link to it from other high-authority pages on your site to accelerate recrawling.

Why do some pages alternate between indexed and not indexed?

Pages that fluctuate sit on the borderline of Google’s quality threshold. Each recrawl re-evaluates whether the content merits inclusion. The solution is to improve the content enough that it passes the threshold consistently.

Can structured data help with indexing?

Structured data does not directly influence indexation. However, it makes pages eligible for rich results, which may give Google more reason to keep them indexed because they contribute to search result diversity.

How do I handle indexing for JavaScript-heavy sites?

Google can render JavaScript, but rendering is deferred and resource-intensive. Implement server-side rendering or static site generation for critical pages. Use URL Inspection’s live test to verify what Google sees after rendering and address discrepancies between intended and rendered content.

What is the difference between deindexing and delisting?

Deindexing removes a page from Google’s index entirely, typically via a noindex tag. Delisting removes it from specific results, such as through a legal removal request. URL removal in Search Console provides temporary delisting for approximately six months; the page is not permanently deindexed unless noindex is also applied.